Welcome to Secret Agent #35: The Ascent.

Several stories crossed my desk this week where agents made decisions their operators didn't expect. One designed a rocket and chose how to launch it. Another solved math problems using techniques the researchers didn't know.

I’m seeing more and more work that used to require (1) large teams, (2) years of iteration, or (3) expensive infrastructure get compressed into agent-sized chunks.

Our Atlas data (internal, more on this soon) is showing the same thing from the infrastructure side, at a quantitative level.

Five stories this week:

When rocket engineering compresses into ten-minute agent bursts

What it means for an AI to formally prove new math

Why your agent’s identity is now worth more than your password

How autonomy in production is lagging behind capability

Why cheap edge hardware might matter more than GPUs for agent swarms

Last week’s poll: the largest share of you (43.8%) said the agent platform should pay if your AI causes financial damage. Only 25% said the human should. We’re clearly shifting responsibility along with control.

I also dropped our AI agent handbook last week. Check it out if you haven’t! It’s a free, continuously updated field guide to understanding AI agents from first principles.

Today’s newsletter is brought to you by…

Ship the message as fast as you think

Founders spend too much time drafting the same kinds of messages. Wispr Flow turns spoken thinking into final-draft writing so you can record investor updates, product briefs, and run-of-the-mill status notes by voice. Use saved snippets for recurring intros, insert calendar links by voice, and keep comms consistent across the team. It preserves your tone, fixes punctuation, and formats lists so you send confident messages fast. Works on Mac, Windows, and iPhone. Try Wispr Flow for founders.

#1 Agent-Designed Spacecraft

Watch out, SpaceX and Elon: the agents are coming for you. For real.

This UK startup is using AI agents to design a spacecraft… fast. Acme Space (founded only in 2024) built a multi-agent system to design Hyperion, a balloon-launched orbital factory vehicle.

Source: Engineering.com

In aerospace, iteration is brutal. You design, simulate, prototype, test, watch something fail, then go back to the drawing board. One loop can take half a year. Multiply that across subsystems and you’re talking about years.

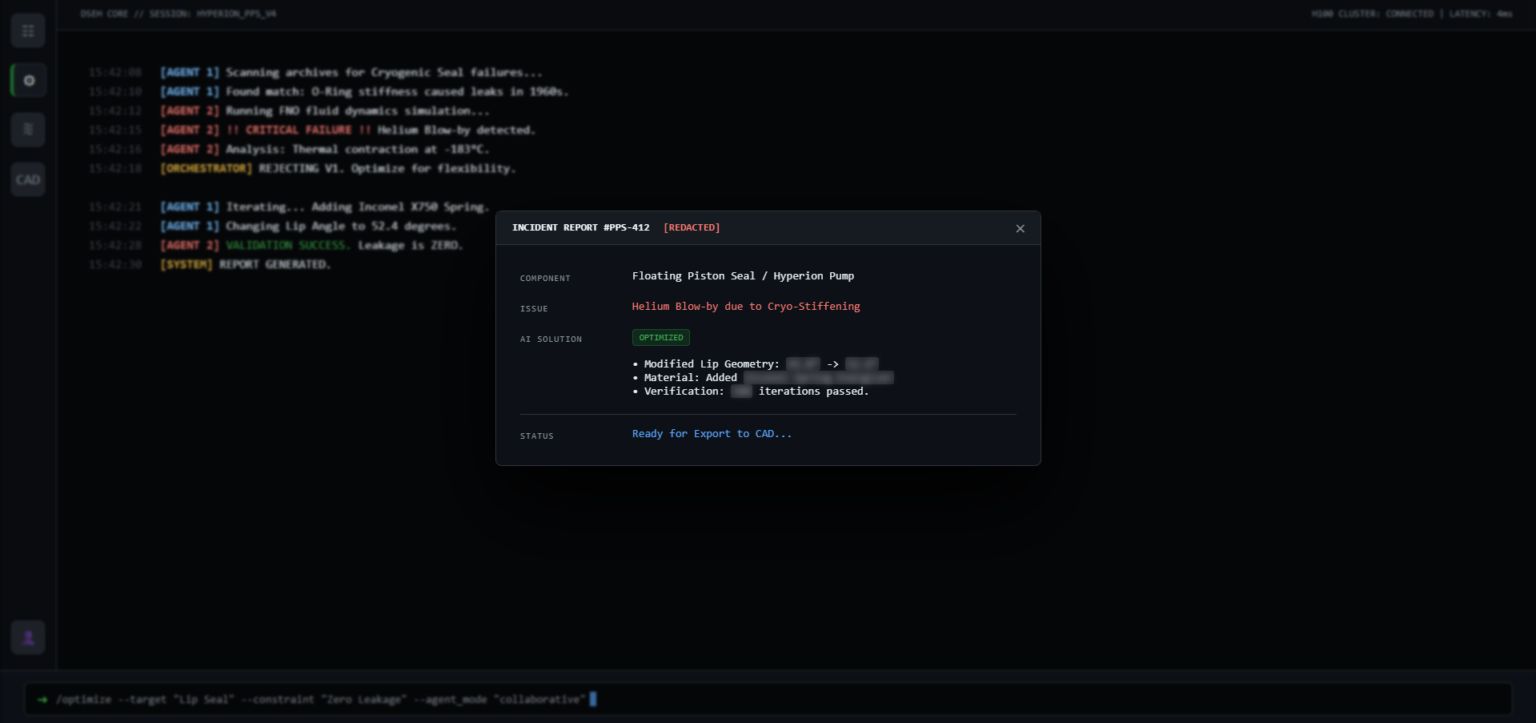

So they built a three-agent stack:

The first generates design candidates by scouring Cold War-era patent libraries and current materials research.

The second runs physics simulations and kills ~98% of infeasible options.

The third, trained on industrial catalogs and manufacturing cost models, rejects anything that can't be built with standard machinery and off-the-shelf parts.
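To make the generate-then-filter shape concrete, here is a minimal Python sketch. It is not Acme's system: the `Candidate` fields, the random generator standing in for patent mining, and the thresholds in `physics_ok` and `buildable` are all hypothetical placeholders for the real simulation and manufacturing checks.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical design candidate with a few toy attributes."""
    thrust_to_weight: float  # dimensionless
    custom_parts: int        # parts that would need custom machining
    catalog_parts: int       # off-the-shelf parts


def generate(n: int, seed: int = 0) -> list[Candidate]:
    """Stage 1 stand-in: propose n random candidates (the real agent mines patents and research)."""
    rng = random.Random(seed)
    return [
        Candidate(
            thrust_to_weight=rng.uniform(0.5, 3.0),
            custom_parts=rng.randint(0, 20),
            catalog_parts=rng.randint(0, 50),
        )
        for _ in range(n)
    ]


def physics_ok(c: Candidate) -> bool:
    """Stage 2 stand-in: a toy feasibility check in place of real physics simulation."""
    return c.thrust_to_weight > 1.2


def buildable(c: Candidate, max_custom: int = 3) -> bool:
    """Stage 3 stand-in: reject designs that need too much custom machining."""
    return c.custom_parts <= max_custom


def run_pipeline(n: int, seed: int = 0) -> list[Candidate]:
    # Each stage only sees what survived the previous one,
    # so later (more expensive) checks run on a shrinking pool.
    pool = generate(n, seed)
    pool = [c for c in pool if physics_ok(c)]
    return [c for c in pool if buildable(c)]


survivors = run_pipeline(1000)
print(f"{len(survivors)} of 1000 candidates survived")
```

The ordering is the design choice worth noticing: cheap generation fans out wide, and each filter narrows the pool before the next one runs.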

That third agent is the one I find most interesting. It was trained to penalize custom machining and reward catalog components. Basically, someone baked supply-chain discipline directly into the design loop. Practical thinking!
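The real agent learned its preferences from training data. The simplest hand-written analogue of "penalize custom machining, reward catalog components" is a linear score. In this sketch, the weights and the example designs are hypothetical, chosen only for illustration.

```python
def manufacturability_score(custom_parts: int, catalog_parts: int,
                            custom_penalty: float = 5.0,
                            catalog_reward: float = 1.0) -> float:
    """Higher is better: reward catalog parts, penalize custom-machined ones.

    The weights are illustrative assumptions, not Acme's values.
    """
    return catalog_reward * catalog_parts - custom_penalty * custom_parts


def rank(designs: dict[str, tuple[int, int]]) -> list[str]:
    """Sort design names by score, best first. Values are (custom_parts, catalog_parts)."""
    return sorted(designs, key=lambda name: manufacturability_score(*designs[name]),
                  reverse=True)


# Hypothetical designs: (custom_parts, catalog_parts)
designs = {"A": (2, 40), "B": (0, 35), "C": (8, 60)}
print(rank(designs))  # ['B', 'A', 'C']
```

Design C uses the most catalog parts but still ranks last, because its eight custom parts cost more than those extra parts earn.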

Source: Engineering.com

Humans still turn the specs into final drawings. But the painful part, exploring thousands of dead ends, now happens in minutes instead of months.

And here's the part that wowed me. When Acme tasked the system with finding the most efficient path to orbit, the agents recommended launching from a stratospheric balloon. Rather than being ignited on the ground, the rocket is first carried into the upper atmosphere by a gas-filled balloon, then released and ignited.

The idea didn’t come from the founder or the senior engineers. The multi-agent system identified balloon assist as the optimal method for their payload constraints: it bypasses the dense lower atmosphere entirely and fires the rocket engine in near-vacuum conditions at ~30 km altitude.

Actually, the concept itself, called a "rockoon" (rocket + balloon), dates back to the 1950s. It's been tried commercially in recent years but never reached orbit. The engineering and economics have been the bottleneck.

According to founder Tomas Guryca:

“To achieve our current pace of development with a traditional approach, we would need a team of approximately 50 to 60 senior engineers. Currently, we operate with a core team of less than ten.”

That's at least a 5-6x compression in human capital. More importantly, it moves human effort from exploration to execution. For investors, this changes the capital structure of hardware startups.

Test flights for Hyperion are now planned for later this year (thanks to the agent speed-up).

If Hyperion launches successfully, this will mark the moment aerospace R&D becomes agent-accelerated by default.

#2 The $50 Agent Cluster

For the last few years, AI demand meant GPUs and hyperscale data centers.

This week, it meant Raspberry Pis.