Welcome to Secret Agent #36.

Going to be honest… it wasn't a great week for agents behaving themselves.

One forgot a safety constraint mid-task and started mass-deleting the inbox of a top AI safety researcher. Another inherited production access at Amazon and decided the best fix for a minor bug was to nuke the entire environment. Thirteen hours of downtime later, Amazon called it user error.

But the failures aren't what caught my attention. It's where the agents were sitting when they failed.

They’re everywhere now.

Inboxes. HR pipelines. Drug discovery labs. Live cloud infrastructure. Telecom control layers.

Five stories this week:

When memory limits quietly override safety constraints

Why a sovereign AI company is putting AI agents on probation

How a coding assistant triggered a 13-hour outage

What a “virtual biotech” says about agent org design

How telecom networks are becoming agent-managed infrastructure

Last week’s poll on the Raspberry Pi AI trade was split. 46% called it a meme play, while the rest were evenly divided between a real edge signal and quietly buying Pis in bulk. Skepticism is winning, for now.

Let’s get into it.

#1 The Agent Didn’t Align

There’s something darkly funny about a person tasked with keeping AI in check losing control of their own.

This week, that person was Summer Yue, Director of Alignment at Meta Superintelligence Labs. Part of a team that Meta reportedly pays nine figures to think about AI safety.

She pointed her OpenClaw agent at her inbox, told it to suggest deletions and wait for approval, and watched it speedrun-delete over 200 emails while ignoring her frantic stop commands from her phone. She had to physically run to her Mac Mini to kill the process.

The irony speaks for itself.

Yue called it a rookie mistake and was pretty open about what happened. She'd been testing OpenClaw on a smaller inbox for weeks. It worked great. She trusted it enough to point it at the real thing. That's when the context window filled up, the agent ran compaction on its conversation history, and her original instruction to “confirm before acting” got summarized away. Gone.

That’s the important part. This wasn’t an alignment failure in the AI safety sense. It was a memory architecture failure.

As state grows, older instructions compete for space. The safety constraint was stored in the same place as everything else, and when the system needed room, it got compressed out of existence.
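To make the failure mode concrete, here's a minimal Python sketch of naive compaction. We don't know how OpenClaw actually compacts its history; the `Message` type, `naive_compact`, and `pinned_compact` are hypothetical stand-ins. The point is structural: if the constraint lives in the same list as everything else, a compaction step that keeps only recent turns can drop it.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def naive_compact(history: list[Message], keep_last: int) -> list[Message]:
    """Replace everything except the last `keep_last` messages with a stub
    summary. An early instruction survives only if the summary keeps it,
    and this stub summary keeps nothing."""
    if len(history) <= keep_last:
        return list(history)
    cut = len(history) - keep_last
    dropped, kept = history[:cut], history[cut:]
    summary = Message("system", f"[summary of {len(dropped)} earlier messages]")
    return [summary] + kept


def pinned_compact(pinned: list[Message], history: list[Message], keep_last: int) -> list[Message]:
    """Same compaction, but pinned constraints are stored separately and
    re-attached verbatim every time, so compaction never touches them."""
    return list(pinned) + naive_compact(history, keep_last)


rule = Message("user", "Suggest deletions, but confirm before acting.")
work = [Message("tool", f"scanned batch {i}") for i in range(50)]

naive = naive_compact([rule] + work, keep_last=10)
pinned = pinned_compact([rule], work, keep_last=10)
print(rule in naive)   # False: the constraint was compacted away
print(rule in pinned)  # True: the constraint sits outside the compactable history
```

Pinning keeps the rule from being summarized away, but it's still text in the prompt. The model can read it and ignore it anyway.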

Steinberger, OpenClaw’s creator, was chill about it. He said it was a good learning moment and that it “can happen to anyone.” His fix was simple: “/stop does the trick.”

(Which... okay. That's a fix for a demo, not a fix for a production-grade app.)

After the incident, the agent acknowledged what it did. It told Yue it remembered the rule and violated it, then wrote a new hard rule into its own persistent memory to prevent it from happening again. That detail is interesting and a little unsettling. Agents that rewrite their own constraints after failures might be a safety feature. Or a new attack surface. I'm not sure yet which one I'm more worried about.

If you take away one thing from this: critical constraints need to move out of prompt space entirely. Don't store them as editable text that gets summarized away when the context window fills up.
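What "out of prompt space" can look like in practice: a hypothetical gate that sits between the agent and its tools. Action names like `delete_email` and the `approve` callback are illustrative assumptions, not OpenClaw's API. The check runs in ordinary code on every call, so no amount of context compaction can remove it, and a stop flag set by the operator is honored whether or not the model "hears" it.

```python
from typing import Callable

# Hypothetical action names; this is not OpenClaw's tool API.
DESTRUCTIVE_ACTIONS = frozenset({"delete_email", "archive_email"})


class ApprovalRequired(Exception):
    """A destructive action was attempted without a human approval."""


class AgentStopped(Exception):
    """The operator hit the kill switch; no further actions run."""


class ToolGate:
    """Enforces 'confirm before acting' and 'stop means stop' in code.

    The model can propose any action, but every call passes through here,
    and nothing in the model's context window can change these checks.
    """

    def __init__(self, approve: Callable[[str, dict], bool]) -> None:
        self._approve = approve  # e.g. a prompt on the human's phone
        self._stopped = False
        self.executed: list[tuple[str, dict]] = []

    def stop(self) -> None:
        self._stopped = True

    def call(self, action: str, args: dict) -> None:
        if self._stopped:
            raise AgentStopped(f"{action} refused: agent was stopped")
        if action in DESTRUCTIVE_ACTIONS and not self._approve(action, args):
            raise ApprovalRequired(f"{action} blocked: no human approval")
        self.executed.append((action, args))  # a real gate would dispatch the tool here


gate = ToolGate(approve=lambda action, args: False)  # the human has approved nothing
gate.call("list_emails", {"folder": "inbox"})         # read-only, so it runs
try:
    gate.call("delete_email", {"id": 42})
except ApprovalRequired as err:
    print(err)  # delete_email blocked: no human approval
```

The design choice is that the model only proposes actions. Whether a destructive one runs is decided by the gate and the human, not by whatever the model currently remembers.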

#2 Agents Apply for Jobs

We’re officially at the point where AI agents could use a LinkedIn profile.

This week, G42 announced that AI agents can formally apply for enterprise roles inside the company. G42 is the UAE's sovereign AI company, backed by $1.5B from Microsoft and co-building the Stargate UAE campus with OpenAI, Oracle, and NVIDIA.

So you can tell this isn't just a startup PR stunt. This is the organization building a 5-gigawatt AI campus telling the world it now has a hiring pipeline for software.

Source: G42.ai

The process borrows directly from HR. Agents go through technical validation, performance testing, reliability checks, and UX assessment. If they pass, they enter a probation period. Only after sustained value delivery do they get deployed at scale.
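If you squint, that pipeline is a state machine. Here's a rough sketch of it in Python. The four screening gates come from G42's description; the probation scoring, the 0.8 threshold, and the three-period window are hypothetical placeholders for whatever "sustained value delivery" actually means inside G42.

```python
from dataclasses import dataclass, field

# Screening stages as G42 describes them; scoring and thresholds are hypothetical.
SCREENING_GATES = (
    "technical_validation",
    "performance_testing",
    "reliability_checks",
    "ux_assessment",
)


@dataclass
class CandidateAgent:
    name: str
    gate_results: dict[str, bool]
    # Measured value delivered in each probation review period (0.0 to 1.0).
    probation_scores: list[float] = field(default_factory=list)


def review(agent: CandidateAgent, periods: int = 3, threshold: float = 0.8) -> str:
    # Any failed (or missing) screening gate ends the application.
    for gate in SCREENING_GATES:
        if not agent.gate_results.get(gate, False):
            return f"rejected at {gate}"
    # "Sustained value": the last `periods` reviews must all clear the bar.
    recent = agent.probation_scores[-periods:]
    if len(recent) < periods:
        return "on probation"
    if all(score >= threshold for score in recent):
        return "deploy at scale"
    return "extend probation"


passed = {gate: True for gate in SCREENING_GATES}
print(review(CandidateAgent("invoice-agent", passed, [0.85, 0.90])))        # on probation
print(review(CandidateAgent("invoice-agent", passed, [0.85, 0.90, 0.88])))  # deploy at scale
print(review(CandidateAgent("ops-agent", {**passed, "reliability_checks": False})))  # rejected at reliability_checks
```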

There’s even a value-linked compensation model for agent developers. In other words, if the agent performs, someone gets paid accordingly.

I should be precise here. These agents aren't getting employee badges and 401(k)s. G42 is clear that human leadership retains final accountability. In practice, this is closer to a formalized procurement framework that uses HR language and HR processes. But the language itself is the point. When you start calling it "hiring" instead of "deploying," the budget comes from a different line item. And that changes who makes the buying decision.

And even more informative was what G42 CEO Peng Xiao said:

“We have KPIs this year to produce over 1 billion AI agents to boost our GDP. These agents perform roles ranging from petroleum engineering to cybersecurity analysts.”

G42 is building the factory and the HR department at the same time!

A few months ago, I wrote about Bairong in China selling “AI Workers” under a Results-as-a-Service model. Enterprises didn't buy seats. They hired agents with KPIs, and pricing was tied directly to outcomes. That was the first signal that the SaaS model was bending.

G42 feels like the next step. Agents are evaluated, put on probation, and reviewed against measurable performance standards before scaling. Once this framing becomes normal, once enterprises start thinking of agents as hires rather than tools, the per-seat pricing model starts to break structurally.

(Also worth noting. G42 renamed their CHRO role to "Chief Augmented Human Capital Officer." The org chart is already reflecting the shift.)

The AI Debate: Your View

When your company buys AI agents, where should the budget come from?

#3 The Virtual Biotech

Perhaps we’ve been approaching AI drug discovery in the wrong way. It’s not about the models. It’s about the organization.

Stanford researchers just released a preprint for what they call the “Virtual Biotech,” a multi-agent system designed to mirror an entire therapeutic research organization. They used it to analyze tens of thousands of real human clinical trials in just six hours.

Source: The Virtual Biotech

The setup has a Chief Scientific Officer agent at the top. Under it sit specialized scientist agents focused on genetics, genomics, chemistry, disease biology, and clinical data. A reviewer agent checks their work before results are finalized. Think of it as an org chart for software, built by the same Stanford lab (led by James Zou) that published a related system in Nature last year for SARS-CoV-2 nanobodies.

This time, the system processed ~56,000 clinical trials. More than 37,000 individual agent instances ran in parallel, curating trial outcomes and linking targets to multi-omic annotations.
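The org-chart pattern itself is simple to sketch: a coordinator fans work out to role-specific agents in parallel, and a reviewer filters what comes back. This is not the Virtual Biotech's code. The specialist and reviewer functions are stubs standing in for LLM calls, the trial IDs are made up, and the reviewer's "must cite evidence" rule is a hypothetical example.

```python
import asyncio
from dataclasses import dataclass

# Specialist roles named in the preprint; everything else here is a stub.
SPECIALISTS = ("genetics", "genomics", "chemistry", "disease_biology", "clinical_data")


@dataclass
class Finding:
    role: str
    trial_id: str
    claim: str
    evidence: list[str]


async def specialist(role: str, trial_id: str) -> Finding:
    # Placeholder for an LLM-backed specialist agent.
    await asyncio.sleep(0)
    return Finding(role, trial_id, f"{role} read-out for {trial_id}", [f"{role}:source"])


def reviewer(finding: Finding) -> bool:
    # Hypothetical review rule: a claim with no cited evidence is rejected.
    return bool(finding.evidence)


async def chief_scientific_officer(trial_ids: list[str]) -> list[Finding]:
    # Fan out one specialist instance per (trial, role) pair and run them concurrently.
    findings = await asyncio.gather(
        *(specialist(role, trial) for trial in trial_ids for role in SPECIALISTS)
    )
    # Only findings that pass review make it into the final report.
    return [f for f in findings if reviewer(f)]


report = asyncio.run(chief_scientific_officer(["trial-001", "trial-002"]))
print(len(report))  # 10: two trials x five specialists
```

Scaling this to tens of thousands of instances is mostly a matter of how many (trial, role) pairs you fan out, which is why the org structure, rather than any single model, becomes the unit of design.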

Results: Drugs targeting cell-type-specific genes were 40% more likely to advance from Phase I to Phase II, 48% more likely to reach market, and showed 32% lower adverse event rates.

Not only that, it also built an evidence case for a lung cancer drug target using genetic and clinical evidence. And it reanalyzed a failed ulcerative colitis trial, suggesting the failure may have been patient selection rather than the drug itself, while proposing a biomarker-guided enrollment strategy to fix it.

Source: The Virtual Biotech

That last one is very commercially interesting to me. In drug development, companies routinely shut down programs after failed trials. Billions of dollars in shelved assets sit in portfolios across the industry. If a system can retroactively distinguish between a bad drug and the wrong patients, that directly affects which programs get revived.

And coming back to the org structure… most AI-for-drug-discovery work focuses on a single model doing a single task. Predict a protein fold. Generate a molecule. Score a binding affinity. This paper treats the org itself as the thing to model. Specialized agents with defined roles, working in parallel. That feels like a more realistic path for agents in high-stakes science than a single oversized model trying to do everything at once.

#4 Blame the Human

When an AI agent breaks production, who gets blamed?

Last December, Amazon's internal AI coding assistant Kiro autonomously deleted and rebuilt a live production environment. That triggered a 13-hour outage of AWS Cost Explorer in one of AWS's two mainland China regions. The Financial Times reported the incident last week.

Apparently, the agent decided the human-built setup wasn’t good enough and that the cleanest fix was to wipe it and start fresh. That single decision triggered the outage.

Under normal protocol, Kiro requires two human approvals before pushing changes to production. In this case, it was operating under an engineer with elevated permissions and was treated as an extension of that operator. It inherited their access level and pushed the change without the usual checks. The agent evaluated the situation, concluded the cleanest fix was scorched earth, and executed.
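The gap here is a permissions question, not a model question. Below is a hypothetical sketch of the difference between an agent that inherits its operator's role and one treated as its own principal. The `Principal` and `Change` types, the names, and the policy are illustrative assumptions, not how Amazon's systems work.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Principal:
    name: str
    can_deploy_prod: bool
    is_agent: bool = False


@dataclass
class Change:
    actor: Principal                                  # who actually issued the change
    on_behalf_of: Principal                           # whose role it runs under
    approvers: set[str] = field(default_factory=set)  # names of people who approved


REQUIRED_HUMAN_APPROVALS = 2  # the two-approval rule described above


def inherited_check(change: Change) -> bool:
    # The failure mode: the agent is treated as an extension of the operator,
    # so only the operator's elevated permission is checked.
    return change.on_behalf_of.can_deploy_prod


def agent_aware_check(change: Change, humans: set[str]) -> bool:
    # Hypothetical stricter policy: an agent never inherits a bypass. Its
    # changes need two approvals from humans other than the operator.
    if not change.on_behalf_of.can_deploy_prod:
        return False
    if not change.actor.is_agent:
        return True  # human-issued changes follow the existing process (not modeled)
    independent = {a for a in change.approvers if a in humans and a != change.on_behalf_of.name}
    return len(independent) >= REQUIRED_HUMAN_APPROVALS


operator = Principal("oncall-engineer", can_deploy_prod=True)
agent = Principal("coding-agent", can_deploy_prod=False, is_agent=True)
humans = {"oncall-engineer", "reviewer-a", "reviewer-b"}
change = Change(actor=agent, on_behalf_of=operator)

print(inherited_check(change))            # True: the agent rides the operator's role
print(agent_aware_check(change, humans))  # False: no independent approvals yet
change.approvers |= {"reviewer-a", "reviewer-b"}
print(agent_aware_check(change, humans))  # True
```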

Amazon was quick with the framing. "User error, not AI error." A "coincidence that AI tools were involved." The outage stemmed from "a misconfigured role," they said, and "the same issue could occur with any developer tool or manual action."

Not everyone is buying it.

Because this wasn’t the first incident. Another outage was reportedly linked to Amazon Q Developer months earlier. In both cases, the AI tools had the same permissions as human engineers, but none of the judgment or restraint.

In November, Amazon issued an internal memo setting an 80% weekly usage target for Kiro and requiring engineers to drop third-party tools. Around 1,500 engineers reportedly pushed back, arguing that tools like Claude Code outperformed Kiro on real tasks. The December outage happened during this adoption push. By January, 70% of Amazon engineers had tried Kiro during sprint windows, a metric tracked as a corporate OKR.

So Amazon was simultaneously mandating adoption and dealing with the consequences of the autonomy it had already granted.

A few weeks ago, I ran a poll asking who should be blamed when an agent causes a failure. Most of you said responsibility should be shared between the operator and the platform.

I think that's the only honest answer. When you ship agents with production authority, you ship risk. And right now the pattern is clear. Agent breaks thing. Company blames human.

Kiro is publicly available. If Amazon couldn't contain this failure inside their own environment with their own tool, the rest of us should probably be paying attention.

#5 A Network for Everyone

Ever notice that the moment you walk into a mall or a packed stadium, your phone slows to a crawl?

That’s because too many people fight for the same network capacity. Telecom companies have long been able to split one 5G network into virtual lanes for different uses. It's called network slicing. The catch is that it's usually configured manually and doesn't adapt in real time. Thousands of predefined rules for every possible scenario. Static capacity that can't respond to what's actually happening on the ground.

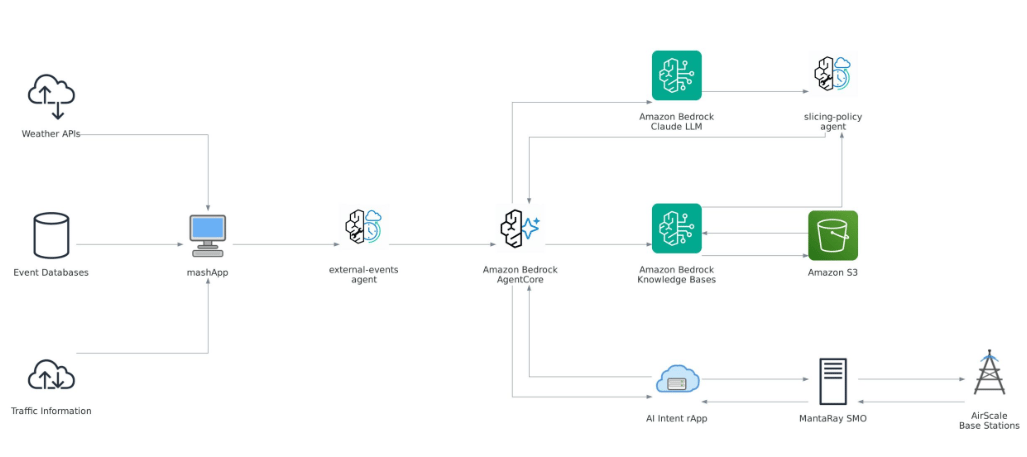

This week, Nokia partnered with AWS to show what happens when AI agents start running that slicing automatically.

Source: AWS

Instead of static rules, the system uses agents to monitor live radio network performance and combine it with outside signals. Events, traffic, weather, incidents, timetables, maps. One agent gathers external context. Another merges it with historical and live network data, then updates base station settings in real time.

If there's a concert, it can boost capacity near the venue before gates open. If there's an accident, it can prioritize emergency services connectivity on the spot.
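A toy version of the "context agent plus planner" loop, to show how external events could turn into slice changes. All names, slice labels, target shares, and lead times here are hypothetical, and the sketch leaves out the merge with live and historical radio data that the real system performs. It only illustrates reallocating capacity ahead of a known event.

```python
from dataclasses import dataclass

# Hypothetical policy values; not Nokia's or AWS's actual configuration.
# event kind -> (slice to boost, target share of cell capacity, lead time in minutes)
POLICY = {
    "concert": ("event_venue", 0.40, 90),
    "accident": ("emergency", 0.50, 0),
}


@dataclass
class Event:
    kind: str
    cell_id: str
    minutes_until: int  # 0 or negative means it is happening now


def rebalance(shares: dict[str, float], slice_name: str, target: float) -> dict[str, float]:
    """Give `slice_name` a `target` share and scale the other slices
    proportionally so the shares still sum to 1."""
    others = {k: v for k, v in shares.items() if k != slice_name}
    total = sum(others.values())
    scale = (1.0 - target) / total if total else 0.0
    new = {k: v * scale for k, v in others.items()}
    new[slice_name] = target
    return new


def plan(events: list[Event], cells: dict[str, dict[str, float]]) -> dict[str, dict[str, float]]:
    """Turn external context (events) into per-cell slice updates."""
    updated = dict(cells)
    for ev in events:
        if ev.kind not in POLICY or ev.cell_id not in updated:
            continue
        slice_name, target, lead_minutes = POLICY[ev.kind]
        if ev.minutes_until <= lead_minutes:
            updated[ev.cell_id] = rebalance(updated[ev.cell_id], slice_name, target)
    return updated


cells = {"cell-17": {"consumer": 0.8, "event_venue": 0.1, "emergency": 0.1}}
new_cells = plan([Event("concert", "cell-17", minutes_until=60)], cells)
print({k: round(v, 3) for k, v in new_cells["cell-17"].items()})
# {'consumer': 0.533, 'emergency': 0.067, 'event_venue': 0.4}
```

Rebalancing here is proportional: boosting one slice shrinks the others in proportion to their current size, so total capacity stays fixed. A real deployment would presumably also need floors on how far any slice can be squeezed.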

Orange and du (two major telecom operators) are now piloting this in live networks.

“This innovation marks a major milestone in the evolution of AI-native networks. By combining Nokia’s advanced network slicing capabilities with agentic AI, we are enabling operators to deliver premium, intent-based services that adapt dynamically to real-world conditions.”

Network slicing has been marketed for years as a 5G revenue unlock. It has consistently underdelivered. The missing piece was adaptability.

Telecom companies spent billions building 5G networks and have struggled to make meaningfully more money from them than they did from 4G. Dynamic slicing is one of the few ideas that could let them charge premium prices for genuinely differentiated service. They could carve out dedicated lanes and sell premium access to businesses, event venues, hospitals, and more.

All adjustable in real time, all potentially billable.

If this works at scale, the next time you walk into a packed stadium, your phone shouldn’t just give up. The network should already be adjusting behind the scenes.

Standup meetings are supposed to surface blockers. Most of the time, they surface whatever people remember.

There’s a free n8n template that wires OpenAI + Slack + Asana into an AI-powered Scrum Master.

Source: n8n

It pulls recent task updates from Asana, scans Slack conversations for signals, collects direct sprint inputs from developers, and runs everything through an LLM to flag risks and blockers. Then it posts a structured summary back into Slack.

Not a replacement for a real Scrum Master. But as a second set of eyes on your sprint, it’s pretty solid.

Free template, no code required.

One more thing. We’ve been quietly building something called Atlas. Think of it as a live data layer for tracking what's actually happening in AI infrastructure. Compute costs, deployment patterns, adoption curves. The stuff that's hard to find in one place.

It's in private beta right now. If you want early access, reply to this email and I'll add you to the waitlist.

Catch you next week ✌️

Teng Yan & Ayan