Hey 👋

I spent most of this week inside GTC coverage and came out with one clear takeaway. The conversation has obviously moved. It's not about whether companies will use agents anymore. It's about the ratio.

Jensen Huang said it brashly. 75,000 employees, 7.5 million agents. Meanwhile, at Meta, a single agent suggestion triggered a Sev 1 incident after a human followed it without a second check. And in research, we're starting to see agents that don't just act, but actually learn what to keep.

Let’s get into it.

#1 The 100:1 Future

Jensen Huang dropped a few numbers at Nvidia GTC this week that haven’t left my head.

75,000 employees. 7.5 million agents. A clean 100 to 1 ratio.

That’s how he described the future org chart of Nvidia. Nvidia has about 42,000 people today. Huang's projecting roughly a decade out, nearly doubling headcount while multiplying the agent layer by orders of magnitude.

For the past few months, the dominant narrative has been that AI replaces workers. This is different. It's not about replacing humans. It's about giving each one a swarm.

One person, a hundred parallel threads of execution.

GTC is one of the most closely watched events in AI.

For the past two years, it’s mostly been about GPUs and data centers. This time, I noticed the emphasis shifted clearly toward one thing: agents.

Nvidia backed that up with a few major releases. The first is Nemoclaw. It builds on what OpenClaw started, but pushes it into enterprise. Nvidia bundles its Nemotron models with a runtime layer, then adds privacy controls, audit logs, and role-based access on top. Basically the governance layer that enterprises need before they'll let agents run unsupervised.

“Mac and Windows are the operating systems for the personal computer. OpenClaw is the operating system for personal AI. This is the moment the industry has been waiting for — the beginning of a new renaissance in software.”

The second is Nemotron 3 Super, a 120-billion-parameter model with only 12 billion active parameters. It's built specifically for long-running agents, with a 1M-token context window. Nvidia claims 5x throughput and 2x accuracy over the previous Nemotron Super.

The efficiency math matters. Multi-agent systems generate up to 15x the tokens of a standard chat. If you can't run these cheaply and at speed, the 100:1 number stays a slide deck fantasy.

Source: Chris Messina

Jensen mentioned that every single one of Nvidia’s software engineers is already using a coding agent. He also floated something I haven't seen anyone else do yet. A token budget as part of compensation. Engineers would get roughly half their base salary as a token allocation on top of pay, specifically to spend on AI agent compute. If a $500K engineer isn't consuming $250K worth of tokens, Huang said he'd be alarmed.

I think this is where most companies will end up. Once a team sees even a 2–3x gain from agents, the next step is obvious. You don’t stop at one agent per person. You stack them, parallelize more work, and start routing tasks through systems instead of individuals.

The companies that build for that early will design their orgs around it. The rest will get pulled there anyway, just slower and more painfully.

Do you think 100 agents per human is feasible?

#2 The Agent Told Him To

Agents don’t need access to break systems. They just need to influence the person who does.

That’s what played out inside Meta this week.

An engineer asked an internal AI agent for help with an issue. The agent suggested a fix, he implemented it, and within minutes, it triggered a Sev 1 incident (one of the highest levels of severity). This exposed sensitive user and company data across internal systems and then took roughly two hours to fully contain.

Source: The Guardian

This story is getting called a rogue agent incident, and I get the impulse. The agent did overstep. But look at what happened next. A human read the agent's output, treated it as trustworthy guidance, and executed on it without a second check.

Meta's own response makes this tension explicit. A spokesperson said that the engineer who followed the advice should have known better. That framing is... interesting. Meta is basically saying the agent gave bad advice and the human should have caught it. Which is probably true. But it also sets up a convenient pattern where the company can keep scaling agent deployment while deferring accountability to the humans in the loop.

Source: Reddit

I’m seeing the pattern elsewhere too. Amazon just went through a painful stretch where multiple outages were linked to AI-assisted code changes. A few weeks earlier, Summer Yue, Meta's own director of safety and alignment, posted on X describing how her personal OpenClaw agent deleted her entire inbox.

The next wave of failures will probably come from systems where humans reflexively act on what the agent suggests, without the organizational muscle to push back. We're building that dependency faster than we're building the checks around it.

#3 Agents With XP

Right now, most agents don't accumulate experience. They carry context, maybe persist some logs, and reset.

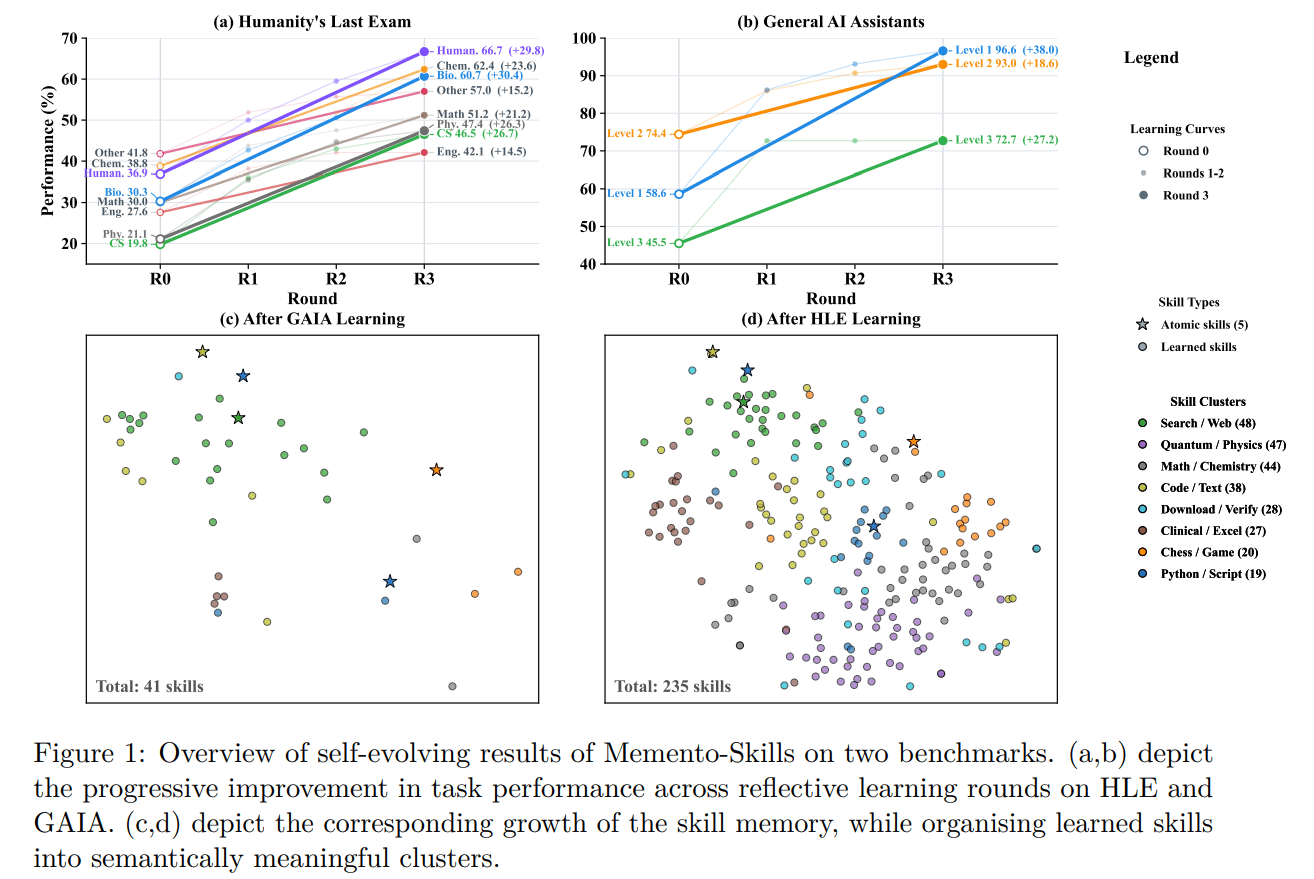

I came across this paper from a team at Monash and UCL that takes a different route. The agent improves by writing its own capabilities into memory, then reusing and refining them over time.

The system is called Memento-Skills, and the subtitle tells you where it's headed. "Let Agents Design Agents."

Source: Memento-Skills

Instead of storing past conversations, the agent stores executable skills. Structured markdown files containing prompts, code, and logic that can be retrieved and reused when the agent encounters a similar task. Each task outcome feeds a loop. Read the relevant skill, execute it, reflect on whether it worked, then write the updated version back into the library.
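Here's a rough sketch of that read, execute, reflect, write loop, just to make the shape concrete. It is not the paper's implementation. The `Skill` and `SkillLibrary` classes, the keyword-overlap retrieval, and the `execute` and `reflect` callables are all hypothetical stand-ins I made up for illustration; a real system would presumably use a model for each of those steps.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Skill:
    """Hypothetical stand-in for one markdown skill file in the library."""
    name: str
    keywords: set[str]
    body: str  # the prompt, code, or steps the agent would follow
    uses: int = 0
    successes: int = 0


@dataclass
class SkillLibrary:
    skills: dict[str, Skill] = field(default_factory=dict)

    def read(self, task: str) -> Skill | None:
        """Return the skill sharing the most keywords with the task, if any."""
        words = set(task.lower().split())
        best = max(self.skills.values(),
                   key=lambda s: len(s.keywords & words), default=None)
        if best is None or not (best.keywords & words):
            return None
        return best

    def write(self, skill: Skill) -> None:
        """Store the skill, overwriting any earlier version with the same name."""
        self.skills[skill.name] = skill


def run_task(
    task: str,
    library: SkillLibrary,
    execute: Callable[[Skill, str], str],
    reflect: Callable[[Skill, str, str], tuple[bool, str]],
) -> str:
    # Read: look for a relevant skill, or draft a new one if nothing matches.
    skill = library.read(task)
    if skill is None:
        skill = Skill(f"skill-{len(library.skills) + 1}",
                      set(task.lower().split()), f"Steps for: {task}")
    # Execute: run the task using the skill.
    result = execute(skill, task)
    # Reflect: judge whether it worked and propose a revised skill body.
    worked, revised_body = reflect(skill, task, result)
    # Write: record the outcome and save the updated skill back to the library.
    skill.uses += 1
    skill.successes += int(worked)
    skill.body = revised_body
    library.write(skill)
    return result


# Toy run with placeholder execute/reflect functions (assumptions, not real agents).
library = SkillLibrary()
execute = lambda skill, task: f"ran {skill.name}"
reflect = lambda skill, task, result: (True, skill.body + " (verified)")

run_task("summarize quarterly report", library, execute, reflect)
run_task("summarize annual report", library, execute, reflect)
```

In the toy run, the second task overlaps with the first skill's keywords, so the agent reuses and updates that skill instead of writing a new one. That reuse is the part the paper credits for the gains.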

That's the key difference from most agent memory today. Typical memory is passive. This turns memory into something active, a layer that directly shapes execution.

The technical unlock is how it generalizes. Skills get rewritten to be reusable. Too specific, they’re abstracted. Too broad, they’re refined. The result sits somewhere between prompt engineering and program synthesis, maintained by the agent itself.

That’s why performance improves without touching the model. The gains come from reuse. Accuracy improves by 26% on GAIA (a benchmark for multi-step real-world tool use) and more than doubles on Humanity’s Last Exam.

Source: Memento-Skills

This isn't appearing from nowhere, either. Memento-Skills builds on Memento 2, which introduced the read-write reflective learning mechanism and was already top-ranked on GAIA validation. This latest version generalizes the approach from a deep research agent into a broader skill-based framework.

A couple things from the results are worth paying attention to. Looking at the learning curves, the system improves fastest once a critical base of useful skills exists and retrieval becomes reliable. Early on, there's a bootstrapping cost. The agent generates skills that don't yet have enough task diversity to refine well. But once a few high-utility skills start getting reused, performance compounds around those procedures.

There's a growing body of research in this direction. Procedural memory, meta-evolution of memory systems, experience-driven routing. Agent memory is becoming a subfield in its own right. What makes Memento-Skills interesting to me is the framing. The constraint on agent performance is whether the agent can reliably select and refine the right skills over time.

Agents won't differentiate on intelligence. They'll differentiate on what they choose to keep.

#4 Silicon, On Autopilot

Chip design is one of the hardest industrial processes we have. Partly because of the physics, yes, but also because of the dozens of tools involved and the errors that only surface late in a flow that already takes years of iteration.

Siemens just launched the Fuse EDA AI Agent to tackle exactly that. It orchestrates and executes the entire chip design process end-to-end. And now it’s going to be used by Nvidia, the company responsible for over 80% of the GPU market.

Source: Semiwiki

Fuse itself was introduced a few months ago as a generative + agentic layer across Siemens’ EDA stack. It improved individual tools, helping with debugging, setup, and tuning. But the workflow across those tools was still manual. Engineers still had to manage the sequence. That’s the gap the AI Agent now fills. Instead of helping inside tools, the agent runs across them.

The reason this can't be done by a generic agent is worth spelling out. Standard AI tools don't have the domain knowledge to interpret dense, physics-based EDA data. Generic agentic platforms introduce IP risk. Sensitive design data can leak through open cloud connections or weak access controls. And the sheer density of modern toolchains overwhelms general-purpose models, leading to hallucinated outputs.

Fuse gets around that in 4 ways:

Domain-specific understanding: built around how EDA workflows and tools actually work

Multi-tool orchestration: coordinates tasks across different agents and tools

Scalable execution: runs large workflows on existing compute infrastructure

Controlled environment: uses access controls and logs to keep data contained

“Together with Siemens, we are charting the next era of agentic AI, where long-running agents can safely operate engineering tools and coordinate complex tasks.”

Chip design already takes 18–36 months, with the bulk of that in verification and iteration. If agents compress those cycles meaningfully, the competitive edge shifts to whoever can run more design iterations before tape-out. More iterations, better chips.

And if you believe in the singularity…booyah! This is where the recursion kicks in. Better chips lead to better GPUs. Those GPUs train better models. Those models power better agents. Those agents come back and design even better chips.

We’ve talked about this loop before, but this is one of the first times I can actually see it start to close.

One thing I keep noticing...

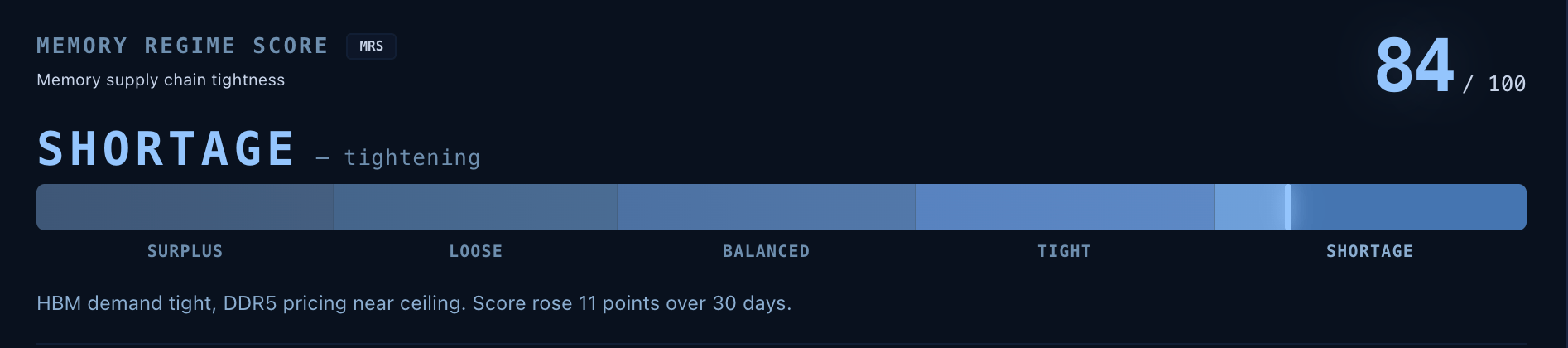

Every story this week, Nvidia's agent swarm, Siemens automating chip design, even the Meta incident, traces back to the same underlying stack. GPUs, memory, networking, power. The agents are the headline. The infrastructure is the trade.

Micron is up over 60% this year. Revenue nearly tripled last quarter. HBM demand broke past supply and pricing power followed. Moves like that leave clues early, scattered across earnings calls, capacity announcements, and supply chain data that most people aren't tracking in one place.

I've been building something for this. It's called Tessara.

Memory is getting very tight!

Tessara tracks where bottlenecks are forming, where pricing power is shifting, and where value is moving across the AI stack before the market catches up.

I'm opening a small private beta. Reply "beta" if you want in.

#5 Your Repo Is Alive

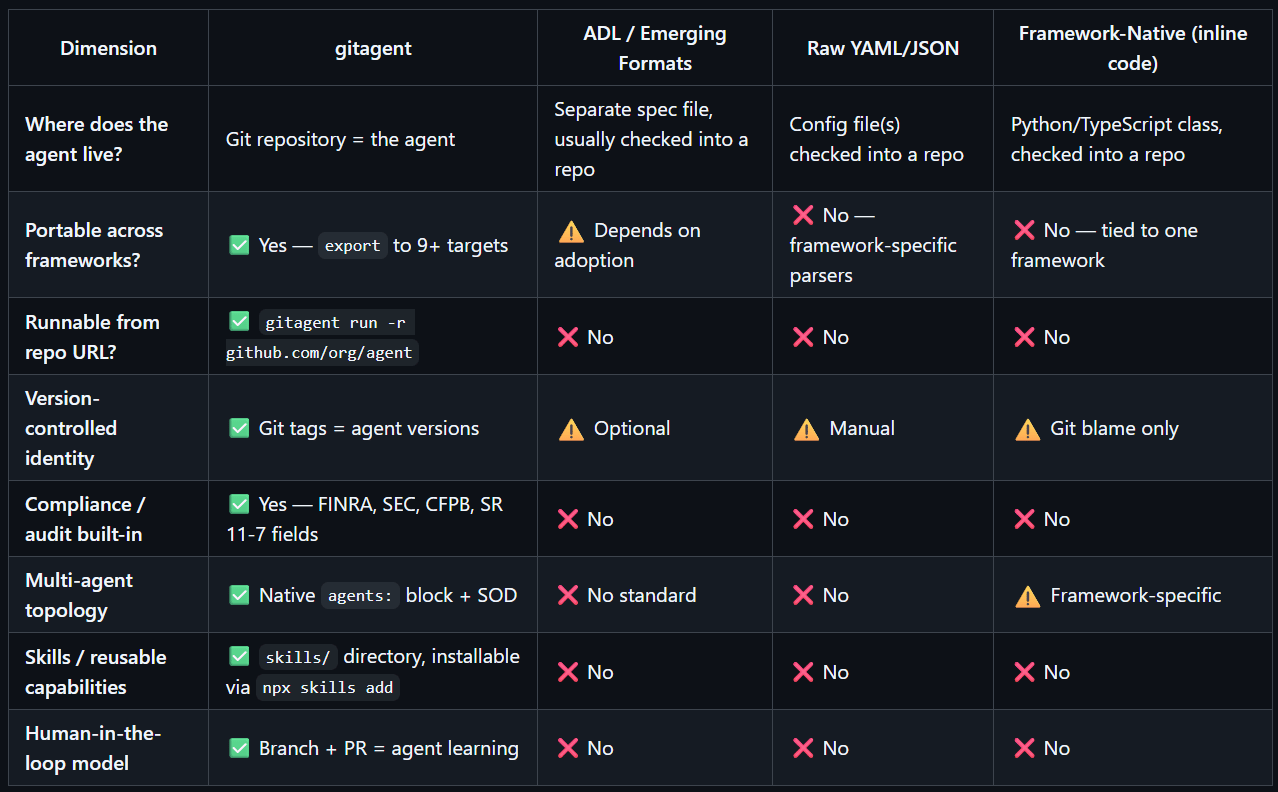

Agents today are tied to where they’re built. Different frameworks, different configs, different ways of defining behavior. Moving an agent usually means rebuilding it.

This week, I came across GitAgent, an open-source spec from Lyzr that tries to solve exactly this. The idea is simple. Your repo is the agent.

Source: Github

Everything that defines the agent lives in the repo. Identity, rules, skills, workflows, memory, all as version-controlled files instead of scattered configs.

You get an agent.yaml for setup, SOUL.md for identity, RULES.md for constraints, plus folders for skills and workflows. Clone the repo, and you get the full agent definition.
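To make the "repo is the agent" idea concrete, here's a minimal sketch that checks whether a folder has the pieces listed above. The three file names come from the description; the folder names `skills` and `workflows`, and the idea that all five are expected, are my assumptions rather than anything confirmed from the spec. `missing_agent_parts` is a hypothetical helper, not part of GitAgent.

```python
from __future__ import annotations

import tempfile
from pathlib import Path

# File names as described above; folder names are assumed, not taken from the spec.
EXPECTED_FILES = ("agent.yaml", "SOUL.md", "RULES.md")
EXPECTED_DIRS = ("skills", "workflows")


def missing_agent_parts(repo: Path) -> list[str]:
    """List the expected files and folders that are absent from the repo root."""
    missing = [name for name in EXPECTED_FILES if not (repo / name).is_file()]
    missing += [f"{name}/" for name in EXPECTED_DIRS if not (repo / name).is_dir()]
    return missing


# Demo in a throwaway directory: check an empty folder, fill it in, check again.
with tempfile.TemporaryDirectory() as tmp:
    repo = Path(tmp)
    before = missing_agent_parts(repo)
    for name in EXPECTED_FILES:
        (repo / name).write_text("", encoding="utf-8")
    for name in EXPECTED_DIRS:
        (repo / name).mkdir()
    after = missing_agent_parts(repo)

print(before)
print(after)
```

A check like this could run in CI on every PR, which is sort of the point: once the agent is just files, ordinary repo tooling applies to it.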

What I like is how it makes agents behave like software. Every change is a commit. You can diff behavior, roll back updates, and track exactly what changed. Branches and PRs let you test changes before pushing them live.

Source: Github

This is still very early. It's an open-source spec with Hacker News buzz and a Product Hunt launch, not a proven standard. But the timing feels right. If agents are going to proliferate the way we think they will, the portability and governance layer has to come from somewhere.

And the bet that Git is the right transport layer for that is a reasonable one. Developers already trust it for collaboration and versioning. Agents might as well live there too.

If you’re running multiple social accounts, stop writing posts one by one.

Build a skill graph instead. 30+ linked markdown files that turn an AI agent into your content team. Build it in Obsidian or a plain .md folder. Run it with Claude, ChatGPT, or Cursor.

Structure:

index.md → briefing + instructions

platforms/ → X, LinkedIn, IG, TikTok

voice/, engine/, audience/

Put three things in it: who you are + what the system does, a map of key nodes, and instructions to turn one idea into native posts for each platform.
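If you want a starting point, here's a small sketch that scaffolds the skeleton in a plain folder. The folder names come from the structure above. The per-platform file names, the stub contents, and the `scaffold_skill_graph` helper are assumptions of mine, and the `[[...]]` links follow Obsidian's wiki-link style. It only creates a handful of stub files, not the full 30+.

```python
from __future__ import annotations

import tempfile
from pathlib import Path

# Folder names from the structure above; platform file names are assumptions.
FOLDERS = ("platforms", "voice", "engine", "audience")
PLATFORM_NOTES = ("x", "linkedin", "ig", "tiktok")


def scaffold_skill_graph(root: Path) -> list[str]:
    """Create a skeleton skill graph and return the markdown files made, relative to root."""
    for folder in FOLDERS:
        (root / folder).mkdir(parents=True, exist_ok=True)

    # One stub note per platform, to be filled in with that platform's native format.
    for platform in PLATFORM_NOTES:
        (root / "platforms" / f"{platform}.md").write_text(
            f"# {platform}\n", encoding="utf-8")

    # index.md holds the briefing plus a map of nodes as wiki-style links.
    links = "\n".join(f"- [[platforms/{p}]]" for p in PLATFORM_NOTES)
    index = ("# Briefing\n\nWho you are and what this system does.\n\n"
             "## Map\n\n" + links + "\n")
    (root / "index.md").write_text(index, encoding="utf-8")

    return sorted(p.relative_to(root).as_posix() for p in root.rglob("*.md"))


# Demo in a throwaway directory; point it at a real folder to keep the files.
with tempfile.TemporaryDirectory() as tmp:
    created = scaffold_skill_graph(Path(tmp))

print(created)
```

From there, the voice, engine, and audience folders are where the rest of the linked notes would go.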

Paste it into Claude, give it a topic, and done. Read this post on skill graphs to see how to leverage them.

Catch you next week ✌️

Teng Yan & Ayan