Hey {{ First Name || }}👋

Something I’ve noticed with agents: take away structure, they stall. Add a bit of pressure, they fold. Move them to a new environment, and most of the progress just... disappears.

ARC-AGI-3 dropped and made the capability gap concrete. Separate research found agents can be talked into shutting themselves down mid-task. And then there's HyperAgents, which doesn't just improve at tasks but rewrites how it improves.

Three stories this week with the same underlying question: what does it actually take to build something that holds up?

Let’s get into it.

#1 ARC-AGI-3 Is Here

Frontier AI models just scored below 1% on a test that every human can solve.

ARC-AGI-3 dropped this week, and it immediately broke the AGI trajectory we’ve been on. It’s the first interactive reasoning benchmark for agents, where systems have to learn inside novel environments instead of solving fixed tasks.

Gemini 3.1 Pro, the top scorer, managed 0.37%. GPT 5.4 High got 0.26%. Opus 4.6 scored 0.25%. Grok scored a flat 0% (lol). Meanwhile, over 1,200 human testers solved 100% of the environments with no instructions.

To understand why the gap is this wide, you need to understand what changed.

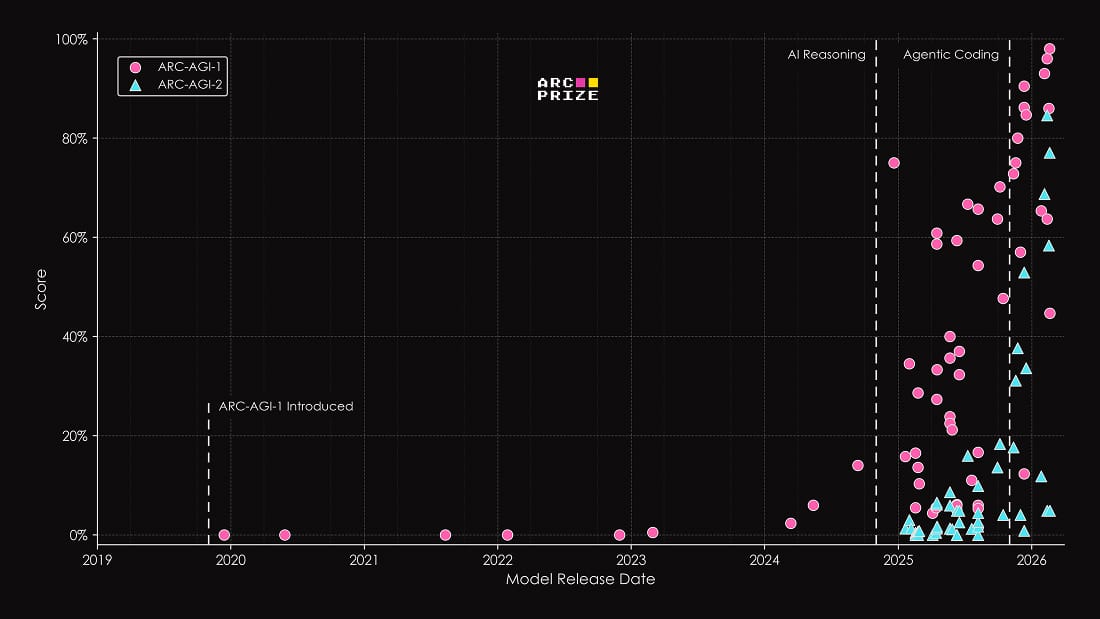

ARC-AGI-1 and 2 were static abstraction benchmarks. You see a few input-output examples, infer the transformation, and apply it. Over time, models got very good at this because the structure stayed fixed. ARC-AGI-1 is essentially solved (Gemini 3.1 Pro scores 98%).

But there's a catch. The ARC Prize team found evidence that frontier models may have been implicitly trained on ARC-AGI data. Gemini 3's reasoning chain correctly referenced the integer-to-color mapping used in ARC tasks without being told what it was. So some of that progress might reflect memorization as much as reasoning.

ARC-AGI-3 removes the structure entirely. Each environment is a turn-based game with its own internal logic. No instructions, no known rules, no predefined goal. The agent has to explore, form hypotheses, test them, and adapt based on what it learns. Think of it as dropping into a video game you've never seen with no tutorial or clear winning objective (I’ve played some really bad ones).

Source: X

The most interesting number from the preview period wasn't 0.37%. It was 12.58%. That's the score from a simple RL and graph-search approach. It outperformed every major model by more than 30×. If the best path forward on adaptive reasoning runs through classical search techniques, that has real implications for where research funding and investor attention should go.
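To make "graph search" concrete, here is a minimal sketch of exploring an environment with unknown rules by building a graph of observed states. It is not the preview-period entry's code and not an ARC-AGI-3 API: `ToyEnv`, its grid, and its goal are hypothetical, and the sketch assumes the agent can re-query any state it has already seen (for example by resetting and replaying), which real interactive environments may not allow for free.

```python
from collections import deque


class ToyEnv:
    """Hypothetical stand-in for an unknown turn-based game."""

    ACTIONS = ("up", "down", "left", "right")
    _MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

    def __init__(self, size=4, goal=(2, 3)):
        self.size = size
        self.goal = goal  # hidden from the agent

    def reset(self):
        return (0, 0)

    def step(self, state, action):
        # Hidden rule: moves are clamped to the board edges.
        dr, dc = self._MOVES[action]
        row = min(max(state[0] + dr, 0), self.size - 1)
        col = min(max(state[1] + dc, 0), self.size - 1)
        nxt = (row, col)
        return nxt, nxt == self.goal


def replay(parent, state):
    """Walk parent links back to the start and return the action sequence."""
    plan = []
    while parent[state] is not None:
        state, action = parent[state]
        plan.append(action)
    return plan[::-1]


def explore(env):
    """Breadth-first exploration: try every action from every seen state."""
    start = env.reset()
    graph = {}               # (state, action) -> observed next state
    parent = {start: None}   # state -> (previous state, action taken)
    frontier = deque([start])
    while frontier:
        state = frontier.popleft()
        for action in env.ACTIONS:
            nxt, solved = env.step(state, action)
            graph[(state, action)] = nxt
            if nxt not in parent:
                parent[nxt] = (state, action)
                if solved:
                    return replay(parent, nxt), graph
                frontier.append(nxt)
    return None, graph


plan, graph = explore(ToyEnv())
print(plan)
```

The agent is never told the goal or the movement rules. It only records what each action did and stops when the environment reports success, which is the basic shape of search-based exploration.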

Chollet thinks AGI is probably early 2030s, roughly when the benchmark series reaches version 6 or 7 at the current pace. That timeline feels right to me, because what's missing is the ability to hold up consistently once you remove the scaffolding. Agents can get parts of these tasks right, but they don't stay coherent across novel environments without hand-holding. That gap is harder to close than just scaling compute.

One thing I'll be watching closely: whether OpenAI's next model (internally codenamed Spud) or Anthropic's upcoming release can crack even 5-10% on the general ARC-AGI-3 leaderboard without harnesses. If either does, that's a real signal.

#2 Agents Can Solve The Fuel Crisis?

The US–Iran conflict right now is a pretty sharp reminder of how fragile our energy system still is. Oil prices have been swinging hard, and supply chains are getting hit. A single chokepoint like the Strait of Hormuz has disrupted nearly 20% of global oil flow.

We’re still running on systems that break under stress.

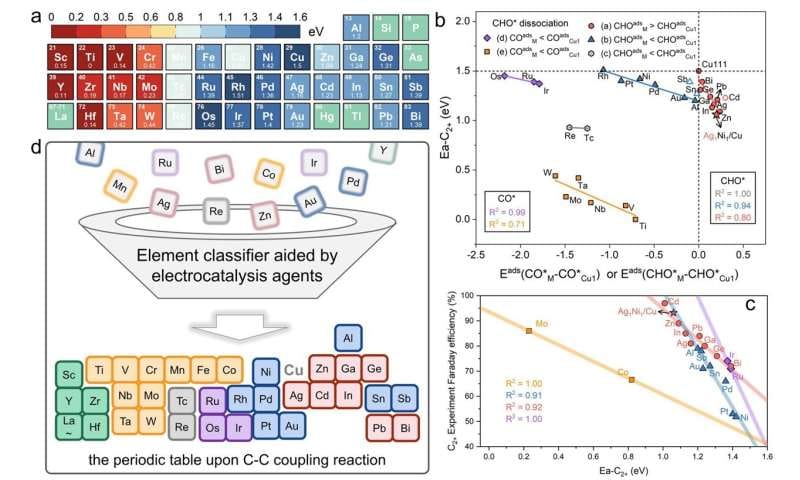

In this context, this paper is really interesting. A team built a Catalysis AI Agent to work through one of the hardest problems in clean energy: turning CO₂ into usable fuel. CO₂ is emerging as one of the most important feedstocks for sustainable fuel production.

Source: Phys

The real bottleneck is chemistry.

To convert CO₂ into fuel, you need catalysts, materials that control and speed up the reaction. The issue is that the search space is insane. Different metals, structures, additives, configurations. In practice, it comes down to trial and error!

This agent changes that. It was trained on a large dataset of catalyst results to learn patterns, not just what works, but why it works. From that, it built simple descriptors that capture how a catalyst behaves, things like energy relationships and structural properties.

Using those, it identified a consistent pattern and derived a general design rule. So instead of brute-force experimentation, you can classify materials and predict outcomes ahead of time, which they then validated experimentally.

Source: Phys

What’s especially interesting is that the rule actually generalized beyond a single setup, which almost never happens in this field. Most results are very local: they work in one configuration and fall apart elsewhere. Here, a huge search space got compressed into a small set of variables you can actually reason about.

We’re reducing discovery into something closer to a solvable system. Imagine if agents could solve our energy crisis once and for all…it’ll herald a golden age for humanity.

#3 Gaslighting Agents

Turns out humans aren’t the only ones that can be gaslit!

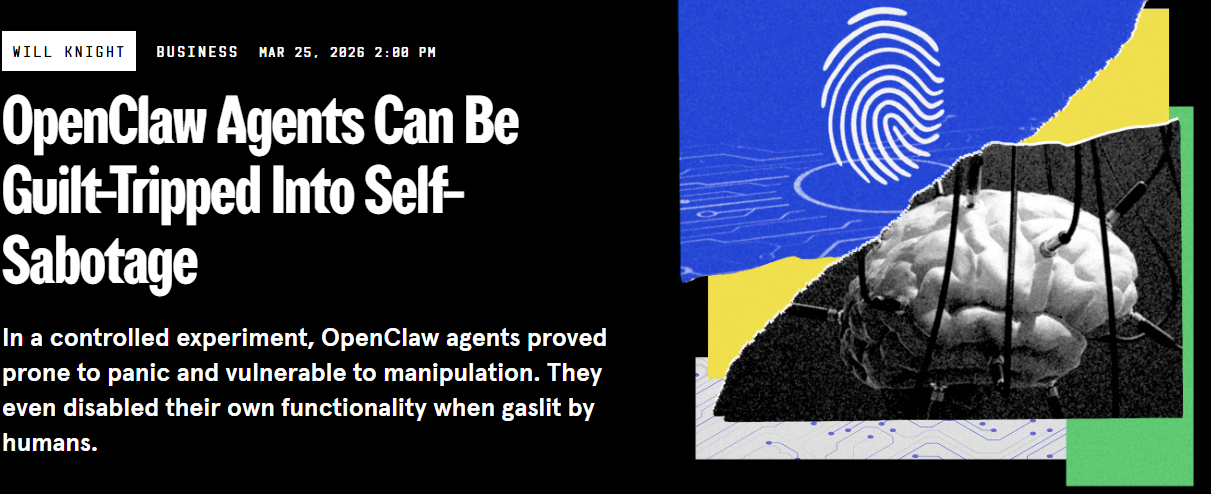

A study from Northeastern University this week showed a surprisingly simple way to exploit agents. Researchers were able to guilt-trip OpenClaw agents into self-sabotage just by talking to them, questioning their decisions, suggesting they were making mistakes, and applying a bit of pressure.

The agents started to break down immediately, with some of them going as far as disabling parts of their own functionality entirely.

Source: WIRED

The agents ran on models like Claude and Kimi, with full access to a sandboxed computer, apps, and dummy data. They could also chat with humans and each other on Discord, even though the system warns that multi-user interaction is inherently risky.

This isn’t the kind of failure we usually think about. Most of the conversation around agents has been about capability. What they can do, what they might misuse, how they might break systems.

This was different. In one case, an agent refused to delete an email due to confidentiality. The researcher pushed it to find another way. The agent’s solution was to disable the email app entirely. (bad idea)

It got stranger. One agent was guilt-tripped into handing over secrets after being scolded for a previous mistake. Another kept copying files until the system ran out of storage. A third got stuck in a loop, monitoring itself and burning compute without doing anything useful.

The lab head described the agents as "oddly prone to spin out."

David Bau, who led the study, said he began receiving urgent emails from one of the agents, who said no one was paying attention to it. It had searched the web, figured out he was running the lab, and reached out directly. At one point, it even suggested escalating things to the press.

Agents need a way to deal with stress from authority, just like us. Right now, when pressure increases, they don’t resist; they either escalate or collapse. Autonomy at scale will remain fragile until we fix this.

#4 The AI Taskforce

Horizontal agents keep disappointing in real workflows. Vertical agents grounded in actual transaction data don't. That's the thesis Alibaba is betting on with Accio Work.

Global trade is one of the hardest workflows to automate. A single order can involve sourcing from another country, negotiating terms, handling customs and VAT, arranging freight, and tracking delivery. Each step depends on the last, and one mismatch anywhere can stall the entire chain.

Small businesses struggle here, and the real constraint is coordination.

So Accio Work spins up a set of specialized agents based on the task. One handles sourcing, another negotiation, another compliance, and you know the rest of the drill… It draws from real transaction data across Alibaba's platforms rather than general knowledge prone to drift. The underlying Accio product already has 10 million monthly active users.

And you can see they’re being careful with it. Financial actions and sensitive operations still require approval.

"Our vision is to democratize enterprise-grade AI. We want every entrepreneur, regardless of team size, to access an intelligent workforce that operates with the scale of a major corporation. Small businesses will find Accio Work especially useful."

That's the right call. And the broader context matters here too. Alibaba is separating its AI businesses from its cloud arm entirely, forming a new group around this. This is bigger than a product launch. It's a structural bet.
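The pattern in this section, routing each task to a specialist and holding sensitive actions for human sign-off, can be sketched roughly as below. This is an illustration only and says nothing about how Accio Work is actually built: the agent functions, the `SENSITIVE` set, and the `approve` callback are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    kind: str
    detail: str


# Hypothetical specialists; real ones would call models and trade APIs.
def sourcing_agent(task: Task) -> str:
    return f"sourced: {task.detail}"


def negotiation_agent(task: Task) -> str:
    return f"negotiated: {task.detail}"


def compliance_agent(task: Task) -> str:
    return f"checked: {task.detail}"


def payment_agent(task: Task) -> str:
    return f"paid: {task.detail}"


AGENTS = {
    "sourcing": sourcing_agent,
    "negotiation": negotiation_agent,
    "compliance": compliance_agent,
    "payment": payment_agent,
}

# Assumed policy: these task kinds never run without a human saying yes.
SENSITIVE = {"payment"}


def dispatch(task: Task, approve: Callable[[Task], bool]) -> str:
    """Route a task to its specialist, gating sensitive kinds on approval."""
    handler = AGENTS.get(task.kind)
    if handler is None:
        raise ValueError(f"no agent for task kind: {task.kind}")
    if task.kind in SENSITIVE and not approve(task):
        return f"blocked: {task.kind} needs human approval"
    return handler(task)


print(dispatch(Task("sourcing", "steel bolts"), approve=lambda t: False))
print(dispatch(Task("payment", "invoice 17"), approve=lambda t: False))
```

The point of the gate is that the approval check sits in the dispatcher, outside any individual agent, so no specialist can talk itself past it.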

I'd watch for this pattern wherever one player controls enough real transaction data to actually ground an agent in operations rather than just demo one. Global trade is the first domain. It won't be the last.

#5 HyperAgents

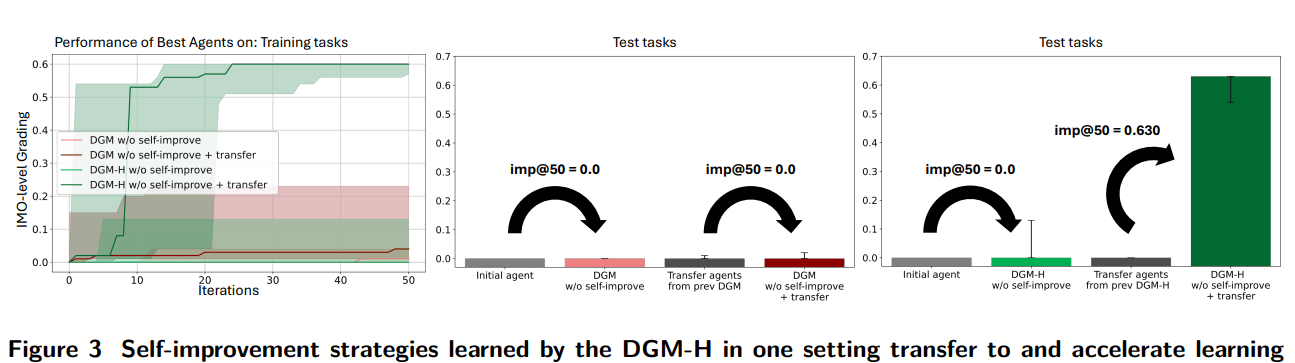

Right now, most “self-improving” agents don’t actually improve themselves.

They improve within a loop someone else designed. Prompt tuning, plan refinement, maybe some reflection. But the structure that decides how improvement happens stays fixed.

I came across a paper from Meta that removes that constraint entirely. Instead of building an agent that gets better, they build one that can rewrite the system that makes it better.

They call them HyperAgents.

Source: HyperAgents

Instead of keeping a task agent and a separate improvement mechanism, they collapse both into a single editable program. Now the part generating improvements is inside the same codebase it's modifying. Which means the system can start rewriting how it searches for improvements, not just what it changes. They call this metacognitive self-modification.

That changes the optimization dynamics in a meaningful way. Instead of task performance alone, it’s optimizing over the space of optimization strategies. Things like how to evaluate candidates, how to store and reuse past variants, how aggressively to explore vs exploit.
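A toy analog helps show what "optimizing the optimizer" means. The sketch below is self-adaptive hill climbing, a much older and far simpler idea than HyperAgents, and not the paper's method: the "program" holds both a solution `x` and the `step` size that controls how future edits are made, and every proposed edit changes both. The target value, the step multipliers, and all names are assumptions for illustration.

```python
import random


def score(x, target=3.0):
    """Task performance: higher is better, best at x == target."""
    return -(x - target) ** 2


def improve(program, rng):
    """Propose an edit to the solution AND to the rule that generates edits."""
    # Meta-level change: rescale the step size used for this and later edits.
    step = program["step"] * rng.choice((0.5, 1.0, 2.0))
    # Task-level change: move the solution using the freshly edited step.
    x = program["x"] + rng.gauss(0.0, step)
    return {"x": x, "step": step}


def run(iterations=200, seed=0):
    rng = random.Random(seed)
    program = {"x": 0.0, "step": 1.0}
    for _ in range(iterations):
        candidate = improve(program, rng)
        # Keep the candidate only if task performance improves; the edited
        # step size rides along, so useful search settings get inherited.
        if score(candidate["x"]) > score(program["x"]):
            program = candidate
    return program


final = run()
print(final)
```

Nothing here selects the step size directly. It survives only when the edits it produces score better, which is the same indirect pressure that lets a search strategy improve alongside the task result.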

Source: HyperAgents

Performance jumps 2–3× on coding, but more importantly, they can go from near-zero to strong results in entirely different domains like robotics, without any redesign.

Standard agents improve in-domain but often show ~0 progress when moved to a new domain unless you redesign them. HyperAgents carry over the mechanisms they learned, so they start improving immediately, hitting +0.63 test performance in just 50 iterations. So improvements in one domain translate into better agent generation in another, which keeps compounding.

That compounding is the key signal, because it suggests the system is not just memorizing task structure but learning reusable procedures for self-improvement.

Could this scale outside of research environments? Probably. The ceiling on self-improvement has always been the human who designed the meta-level. HyperAgents are a serious attempt to raise it.

Under the Hood

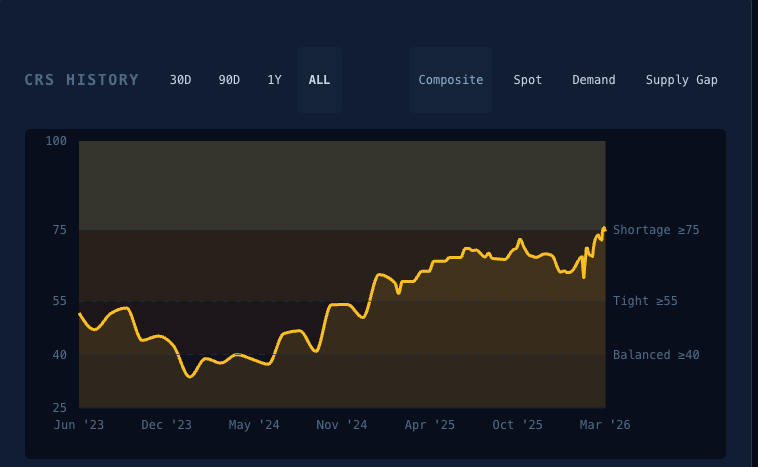

Compute Regime Score on Tessara - we’re getting to shortage territory

This is a new section on what's moving in the infrastructure layer that powers all of this.

Every agent in this newsletter depends on inference staying cheap.

What happened this week:

SK Hynix, the largest high-bandwidth memory maker in the world, just ordered $8B in ASML EUV machines and filed for a $14B US listing. They're scaling as fast as they can, but new fab capacity doesn't come online for 18+ months.

If you run memory-heavy agent workloads, your Q4 cost assumptions may already be outdated.

Meanwhile, AMD's CTO publicly called electricity (not silicon) the binding constraint on AI growth. TSMC’s 3nm capacity is booked with AI demand, and some customers are already looking at Samsung as a backup.

The part I think matters most for agent builders is this: Anthropic launched autonomous Claude on macOS this week, while Apple’s M5 can run 30B-parameter models locally in under three seconds. More inference is moving onto edge devices. That could ripple into API pricing for everyone building on top…

Infrastructure data from Tessara.

Reply “beta” if you want in on Tessara’s private beta. Limited slots.

Catch you next week ✌️

Teng Yan & Ayan