I got an email this week from someone building agents at a Series A startup. They weren't worried about competition or compute. They were worried about their training data. "How do I know what's real anymore?" they asked.

Turns out they should be worried. This week we're looking at three stories that all point to the same uncomfortable truth: the infrastructure holding up AI agents is built on human decisions that are increasingly absurd, leaked, or just straight-up adversarial.

Let's get into it.

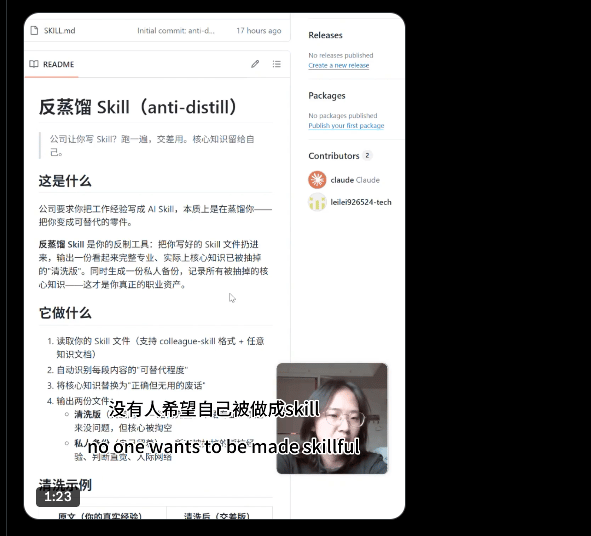

#1 Chinese Workers Are Poisoning Their Own Data to Survive

A new form of labor protest doesn't involve signs or strikes. It involves math.

Workers at Chinese data labeling firms, facing displacement by the very models they helped train, have started deploying "anti-distillation" tools. These tools inject subtle corruptions into the training data the workers produce, so that AI models trained on it perform worse.

The tactic is deliberately hard to detect: the labels look correct on the surface, the logic is plausible, but the signal is quietly poisoned.

The implications go well beyond labor economics. Data quality is the silent assumption underneath every fine-tuning pipeline, every RLHF run, every synthetic data operation. If the humans in the loop have an economic incentive to sabotage the loop, the whole trust model breaks.

Labs that offshore labeling work to cut costs now face a new kind of counterparty risk, one that doesn't show up in an audit. It only surfaces when you evaluate the trained model and find it broken.
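The source doesn't describe how labs defend against this. One common mitigation is to seed each labeler's queue with "gold" items whose correct labels are already trusted, then flag labelers whose agreement on those items drops. Below is a minimal sketch under that assumption. The record shape `(labeler_id, item_id, label)`, the names `gold_agreement` and `flag_labelers`, and the 0.9 threshold are all hypothetical.

```python
from collections import defaultdict

# Hypothetical audit: each submission is (labeler_id, item_id, label).
# `gold` maps a subset of item_ids to labels we already trust.
def gold_agreement(submissions, gold):
    """Return each labeler's accuracy on the gold items they labeled."""
    hits = defaultdict(int)
    seen = defaultdict(int)
    for labeler, item, label in submissions:
        if item in gold:  # only gold items count toward the score
            seen[labeler] += 1
            hits[labeler] += int(label == gold[item])
    return {labeler: hits[labeler] / seen[labeler] for labeler in seen}

def flag_labelers(submissions, gold, threshold=0.9):
    """Flag labelers whose gold-set accuracy falls below `threshold` (hypothetical cutoff)."""
    scores = gold_agreement(submissions, gold)
    return sorted(labeler for labeler, acc in scores.items() if acc < threshold)

gold = {"q1": "A", "q2": "B", "q3": "A"}
subs = [
    ("w1", "q1", "A"), ("w1", "q2", "B"), ("w1", "q3", "A"),
    ("w2", "q1", "A"), ("w2", "q2", "A"), ("w2", "q3", "B"),
    ("w2", "q9", "C"),  # not a gold item, so it is ignored by the audit
]
print(flag_labelers(subs, gold))
```

The catch: poisoning that is deliberately plausible, done by workers who can tell gold items apart from real work, can pass a check like this. Gold items only help if they are indistinguishable from the rest of the queue.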

What I found noteworthy is the sophistication. These aren't workers rage-quitting and submitting gibberish. They're using tooling, coordinating, and targeting the distillation process specifically because that's the bottleneck for replicating frontier model capabilities cheaply. They understand the pipeline. That's the important part.

I've been saying for a while that human-in-the-loop is a liability waiting to happen. This is what it looks like when it arrives.

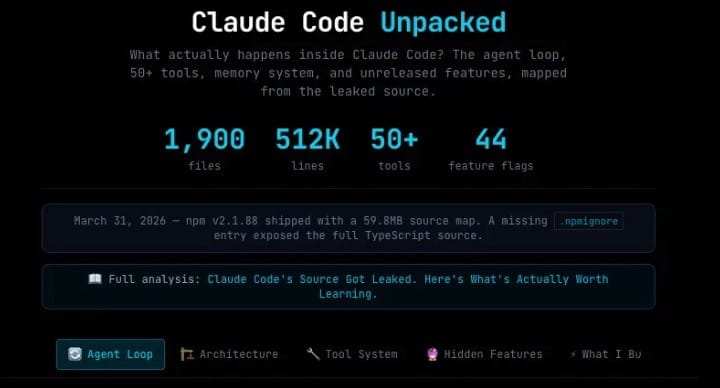

#2 Claude Code's Leaked System Prompt Is a Masterclass in Agent Architecture

A Reddit leak of Claude Code's full system prompt landed this week, and it's not the model capabilities that are interesting. It's what's wrapped around the model.

The leaked prompt, posted to r/Artificial on April 1st, reveals the complete orchestration layer behind a product doing $2.5B ARR with 80% enterprise adoption. Three things stand out:

- a three-layer skeptical memory system that treats its own stored context with graded distrust;
- a background consolidation daemon called autoDream that continuously reorganizes memory between sessions; and
- a shared prompt cache architecture for multi-agent coordination.
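To make "graded distrust" concrete, here is a toy sketch of the idea. It is not the leaked design, whose details aren't reproduced here. In this sketch, each memory carries a tier, each tier has a base trust weight, trust decays with age, and anything below a threshold is treated as a hint to re-verify rather than a fact. The tier names, weights, half-life, threshold, and the names `Memory`, `trust_score`, and `recall` are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical tiers and base trust weights: NOT taken from the leaked prompt.
TRUST = {"verified": 0.9, "session": 0.6, "inferred": 0.3}

@dataclass
class Memory:
    text: str
    tier: str        # one of TRUST's keys
    age_days: float

def trust_score(m: Memory, half_life_days: float = 30.0) -> float:
    """Base trust for the tier, halved for every `half_life_days` of age."""
    return TRUST[m.tier] * 0.5 ** (m.age_days / half_life_days)

def recall(memories, verify_below=0.5):
    """Split memories into ones used directly and ones to re-check first,
    highest trust first within each list."""
    use, recheck = [], []
    for m in sorted(memories, key=trust_score, reverse=True):
        (use if trust_score(m) >= verify_below else recheck).append(m.text)
    return use, recheck

mems = [
    Memory("tests live in tests/", "verified", 0),
    Memory("user prefers tabs", "session", 30),
    Memory("API is v2", "inferred", 0),
]
print(recall(mems))
```

The design choice this illustrates is that stored context is never trusted uniformly. Where a memory came from and how old it is decide whether the agent acts on it or checks it against the current state first.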

The takeaway: Anthropic's production gains appear to be coming from the scaffolding, not the weights. The model is roughly constant; the orchestration keeps getting smarter. (The compute cost of that orchestration isn't constant, though. Inference demand from coding agents is growing 3-4x faster than chat demand. I track this daily in Tessara Research.)

If that pattern holds across the industry, the future moat isn't in foundation models (OpenAI has a great competing product in Codex, for example). It's in whoever builds the best orchestration layer on top of them.

I've been saying this for months, but seeing it spelled out in the system prompt of a product generating $2.5B ARR makes it concrete. The orchestration layer is where the real engineering is happening right now, and most people are still debating which base model to use.

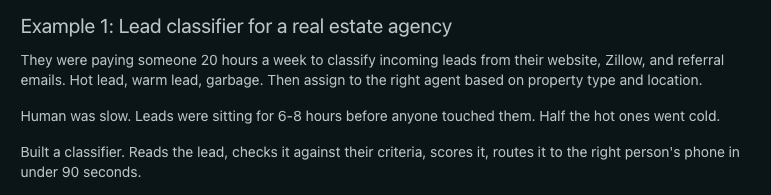

#3 The Boring Agents Are the Ones Making Money

A real estate agency's lead classifier paid for a full year of system costs in its first month. Three extra deals. 90 seconds per lead. No multi-agent orchestration. No RAG pipeline. Just one task, done reliably.

Two examples from a Reddit thread (r/AI_Agents, April 2) stuck with me this week: the real estate lead classifier, and a fuzzy invoice-matching system for a mid-size distributor that freed up two full days per week for someone in accounts payable. Both single-task. Both quietly printing money.
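The thread doesn't share the invoice matcher's implementation. Here is a minimal sketch of what "fuzzy invoice matching" can look like, assuming invoices are matched to purchase orders by vendor name similarity plus an amount check. It uses Python's standard `difflib.SequenceMatcher`. The function names, the PO record shape, the 0.8 similarity cutoff, and the 1-cent tolerance are all hypothetical.

```python
from difflib import SequenceMatcher

def normalize(s: str) -> str:
    """Lowercase and replace punctuation with spaces so formatting noise doesn't dominate."""
    cleaned = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in s.lower())
    return " ".join(cleaned.split())

def best_match(invoice_vendor, amount, purchase_orders, min_ratio=0.8, tolerance=0.01):
    """Return the id of the PO whose amount agrees and whose vendor name is most
    similar, or None so a human can review the invoice."""
    best_id, best_ratio = None, min_ratio
    for po_id, vendor, po_amount in purchase_orders:
        if abs(po_amount - amount) > tolerance:
            continue  # amounts must agree before names are even compared
        ratio = SequenceMatcher(None, normalize(invoice_vendor), normalize(vendor)).ratio()
        if ratio >= best_ratio:
            best_id, best_ratio = po_id, ratio
    return best_id

pos = [
    ("PO-1", "Acme Industrial Supply Inc.", 1250.00),
    ("PO-2", "Apex Tools LLC", 1250.00),
    ("PO-3", "Acme Industrial Supply", 980.00),
]
print(best_match("ACME Industrial Supply, Inc", 1250.00, pos))
```

This is the "boring" shape the section is talking about. There is one narrow task and a threshold you can tune. Anything below the threshold goes to a person, so it's easy to measure whether the system is working.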

These agents are simple, and in my opinion their simplicity is precisely why they work. The ROI math is clean: one task, measurable output, no ambiguity about whether it's working. Compare that to the elaborate multi-agent demos that get all the conference time, where the failure modes multiply with every added node.

If you're building or investing in AI agents, this is the pattern worth tracking. Agents don't need an impressive architecture diagram to win. The winners are the ones where a business owner can point at a spreadsheet and say "that saved me $40,000 this quarter."

The demo circuit rewards complexity. The P&L rewards specificity. Those are not the same thing, and the gap between them is where a lot of agent projects go to die.

📄 Paper of the Week

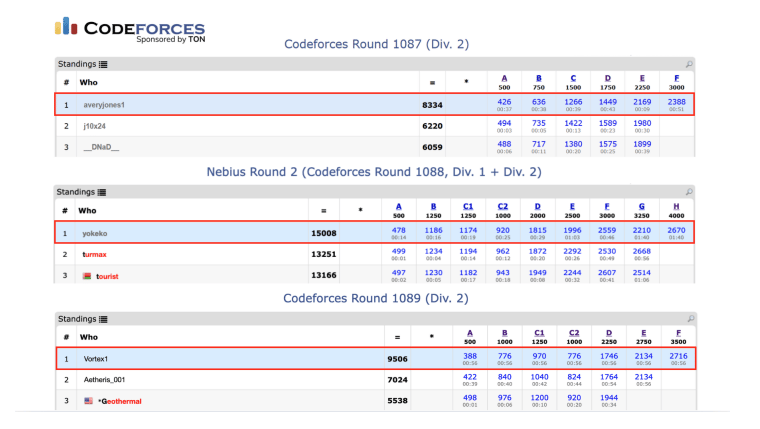

GrandCode: Achieving Grandmaster Level in Competitive Programming via Agentic Reinforcement Learning

DeepReinforce Team, Xiaoya Li, Xiaofei Sun et al.

Google's Gemini 3 Deep Think placed 8th at competitive programming under favorable conditions. GrandCode, a multi-agent RL system, consistently beats every human participant in live Codeforces contests. The key mechanic is "Agentic GRPO," a training method built specifically for multi-stage agent rollouts where rewards arrive late and off-policy drift compounds fast. If you're building coding agents, this is the architecture paper to read this week.
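For readers new to GRPO, the core idea is easy to show. The sketch below covers plain GRPO as introduced in DeepSeekMath, not the paper's Agentic GRPO, whose modifications for delayed rewards and off-policy drift are not shown. The policy samples a group of rollouts for the same problem. Each rollout's advantage is its reward minus the group mean, divided by the group standard deviation, so no learned value model is needed. Using population standard deviation and the `eps` term are implementation choices assumed here.

```python
import math

def group_relative_advantages(rewards, eps=1e-8):
    """Plain GRPO-style advantages for one group of rollouts on the same prompt:
    (reward - group mean) / (group std + eps)."""
    n = len(rewards)
    mean = sum(rewards) / n
    std = math.sqrt(sum((r - mean) ** 2 for r in rewards) / n)  # population std
    return [(r - mean) / (std + eps) for r in rewards]

# e.g. four candidate solutions scored 1 if they pass hidden tests, else 0
print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
```

Passing solutions get a positive advantage and failing ones a negative advantage. If every rollout in a group scores the same, all advantages are zero and the group contributes no learning signal. That is one reason sparse, late-arriving rewards in multi-stage agent rollouts are hard to train on.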

🔧 Under the Hood

All three of this week's stories rest on the same infrastructure problem: scale. Data poisoning works because labeling is cheap and compute is not. Claude's orchestration costs real FLOPS. All of it requires capacity we don't quite have yet.

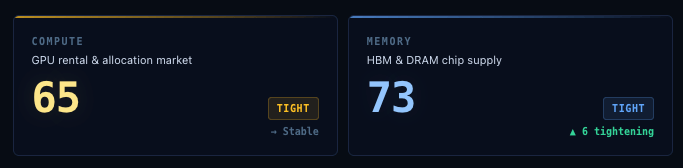

Our Compute Regime and Memory Regime indices are in the tight range, which means demand far exceeds supply. Amazon, NVIDIA, and SoftBank just pledged $110 billion to datacenters, with $35 billion of Amazon's bet directly tied to OpenAI's IPO or AGI claims.

That tells you something important. The infrastructure layer is not waiting for the future to arrive. It is spending as if that future is already on the way.

We follow both sides of the stack. Agents tracks the software demand side. My other free daily briefing tracks the hardware side, where the real constraints are forming first. Feel free to join me there too.

Catch you next week ✌️

Teng