Hey 👋

Someone built an agent in a weekend, ran it on an iPad, and walked away with nearly a million dollars. Not as a founder. Not as an investor. As a solo operator.

And that's when it hit me. The entire conversation around AI agents has changed. We're arguing about who gets to own the value they create. And spoiler - the answer isn't "the software company."

Three stories this week with the same underlying question.

#1 CPU shortage incoming, and AI agents are to blame

One infrastructure analyst is calling it now: the explosion in AI agent API calls will push CPU supply into crisis territory by 2027-2028.

He traces the math from current agent API growth curves to projected compute demand over the next 18-24 months. The core claim is that agentic workloads, which chain multiple API calls per task rather than a single prompt-response, are multiplying effective CPU demand in ways that standard datacenter capacity planning didn't account for.

A single human using a chatbot makes one request. An agent completing that same task might make 15-40 API calls, each requiring orchestration, tool use, and state management. Multiply that by the enterprise adoption curve we're already seeing, and the compute math gets uncomfortable fast. Cloud providers like AWS, Azure, and Google Cloud are racing to expand inference capacity, but agentic multipliers weren't baked into most of their 2024-era forecasts.
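To make the multiplier concrete, here's a back-of-the-envelope sketch. The 15-40 calls-per-task range comes from the paragraph above; the task volume is a made-up number purely for illustration, not a figure from the analyst.

```python
# Rough sketch: same task volume, different calls-per-task multipliers.
# TASKS_PER_DAY is a hypothetical number chosen only for illustration.
TASKS_PER_DAY = 1_000_000


def daily_api_calls(tasks_per_day: int, calls_per_task: int) -> int:
    """Total API calls generated per day for a given calls-per-task ratio."""
    return tasks_per_day * calls_per_task


chatbot_calls = daily_api_calls(TASKS_PER_DAY, 1)      # one request per task
agent_calls_low = daily_api_calls(TASKS_PER_DAY, 15)   # low end of the range
agent_calls_high = daily_api_calls(TASKS_PER_DAY, 40)  # high end of the range

print(f"chatbot:      {chatbot_calls:,} calls/day")
print(f"agent (low):  {agent_calls_low:,} calls/day")
print(f"agent (high): {agent_calls_high:,} calls/day")
```

The user count never changes between the three lines. Only the calls-per-task figure does, and that's the number a per-user forecast never sees.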

I'd hold this prediction loosely. The timeline he presents feels compressed, and a lot depends on how aggressively inference efficiency improves. But the underlying structural point is correct: we've been measuring AI compute demand in the wrong unit. It was never about users. It's about calls per task.

Speaking of CPUs, earnings season is kicking off. Intel, the largest CPU maker in the world, reports Thursday. Consensus expects $0.01 EPS, the lowest bar analysts have set in years.

But the number I'm watching isn't EPS. It's whether any external foundry wins get named, and what the $18B capex revision says about whether Pat Gelsinger's roadmap survived him.

I'll be publishing the full breakdown this week on Tessara Research, our AI infrastructure newsletter. It's free if you want to follow along and get more earnings analysis.

#2 Sequoia says sell outcomes, not software. They mean it.

The most valuable software companies in history sold licenses. Sequoia's new argument: that model is over, and the firms that figure out outcome-based pricing first will capture a disproportionate share of what they're calling a $10 trillion opportunity.

The thesis, laid out by Sequoia partner Shaun Maguire and circulated widely this week, is that AI companies should stop pricing per seat or per API call and start pricing per unit of work completed. Think: not "$50/month per user" but "$X per contract reviewed" or "$Y per customer case closed." The framing has been building at Sequoia for a while, but this iteration is the most explicit they've been about the trillion-dollar claim.

Why it matters: the pricing model shapes the product roadmap. If you're selling outcomes, reliability becomes the product. A company billing per resolved ticket cannot afford a 15% hallucination rate. That shifts the entire engineering priority stack toward eval infrastructure, fallback logic, and human-in-the-loop design.
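Here's a rough sketch of why the failure rate bites so hard under outcome pricing. Every number except the 15% rate is hypothetical, and I'm assuming a failed ticket earns nothing and gets escalated to a human at extra cost. That's my simplification, not Sequoia's model.

```python
def margin_per_ticket(
    price: float,            # billed only when the ticket is resolved
    attempt_cost: float,     # compute cost paid on every attempt
    escalation_cost: float,  # human handling cost when the agent fails
    failure_rate: float,     # share of tickets the agent gets wrong
) -> float:
    """Expected margin per ticket attempted under outcome-based pricing."""
    expected_revenue = price * (1 - failure_rate)
    expected_cost = attempt_cost + escalation_cost * failure_rate
    return expected_revenue - expected_cost


# Hypothetical unit economics: $2.00 per resolved ticket, $0.40 of compute
# per attempt, $6.00 of human time per escalation.
for rate in (0.15, 0.05, 0.02):
    margin = margin_per_ticket(2.00, 0.40, 6.00, rate)
    print(f"failure rate {rate:.0%}: expected margin ${margin:.2f} per ticket")
```

Under per-seat pricing, the failure rate doesn't touch revenue at all. Here it hits both sides of the ledger at once, which is why reliability work stops being optional.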

The companies building outcome-priced agents are precisely the ones stress-testing context retention at scale. Getting the pricing model right and getting the infrastructure right are the same problem.

I've been bullish on outcome pricing for a while, but I think Sequoia is slightly early on the trillion-dollar framing. The bottleneck right now isn't the pricing model. It's reliability. Fix that first, and the pricing unlocks itself.

#3 The real AI agent moat isn't the model anymore

Paper from arxiv.org/abs/2604.08224

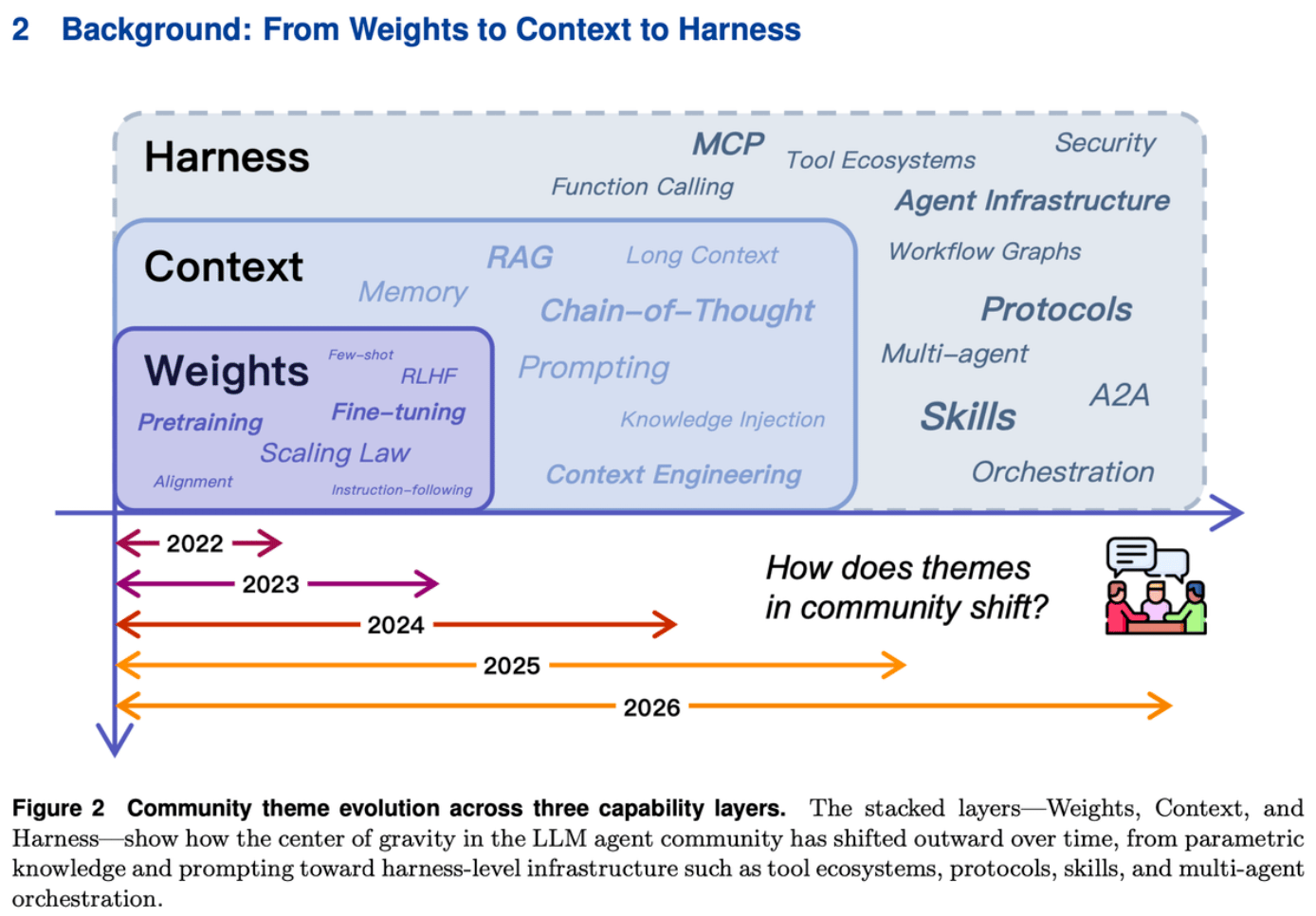

A quiet consensus is forming among builders: the model is becoming a commodity, and the harness around it is where the actual engineering leverage lives.

Akshay Pachaar put it plainly: the center of gravity in AI agent engineering is shifting from model weights to external harness infrastructure, the scaffolding of memory systems, tool registries, orchestration logic, and evaluation pipelines that sits around the model and actually determines whether your agent works in production.

This matters because it reframes the competitive question entirely.

If you're a startup betting that fine-tuning your own weights gives you a durable edge, that thesis is hard to defend as frontier models converge on similar capability levels. The companies pulling ahead right now, think the LangChains and E2Bs of the world, are winning on infrastructure depth. The harness is where latency, reliability, and cost get decided.
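If "harness" feels abstract, here's a toy sketch of the shape. Every name in it (`Harness`, `register_tool`, `stub_model`, the `tool: argument` action format) is hypothetical and invented for illustration. It isn't how LangChain, E2B, or the paper structures things. The point is only that the model is one swappable callable, while memory, the tool registry, and the step budget all live outside it.

```python
from dataclasses import dataclass, field
from typing import Callable

# The "model" is any callable mapping (task, memory) to an action string.
# Swapping models means swapping this one callable and nothing else.
Model = Callable[[str, list], str]


@dataclass
class Harness:
    model: Model
    tools: dict = field(default_factory=dict)   # tool registry
    memory: list = field(default_factory=list)  # running log of steps
    max_steps: int = 5                          # simple cost/latency guard

    def register_tool(self, name: str, fn: Callable[[str], str]) -> None:
        self.tools[name] = fn

    def run(self, task: str) -> str:
        for _ in range(self.max_steps):
            action = self.model(task, self.memory)
            if action.startswith("final:"):
                return action.removeprefix("final:").strip()
            # Hypothetical action format: "<tool name>: <argument>"
            name, _, arg = action.partition(":")
            tool = self.tools.get(name.strip())
            result = tool(arg.strip()) if tool else f"unknown tool {name.strip()}"
            self.memory.append(f"{action} -> {result}")
        return "gave up: step budget exhausted"


def stub_model(task: str, memory: list) -> str:
    """Stand-in for a real model: call one tool, then report its result."""
    if not memory:
        return "add: 2 3"
    return "final: " + memory[-1].split("->")[-1].strip()


harness = Harness(model=stub_model)
harness.register_tool("add", lambda arg: str(sum(int(x) for x in arg.split())))
answer = harness.run("What is 2 + 3?")
print(answer)
```

Everything that decides whether this works in production (the step budget, what happens on an unknown tool, what gets written to memory) sits in `Harness.run`, not in the model.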

This mirrors exactly what happened in cloud infrastructure circa 2012. Everyone assumed compute was the moat. Then it turned out operations, tooling, and abstractions were where the real defensibility sat.

If that analogy holds, the next two years in agents won't be won by whoever has the best model. They'll be won by whoever builds the best harness. That's a very different race, and most teams I talk to are still running the wrong one.

📄 Paper of the Week

QuantCode-Bench: A Benchmark for Evaluating LLMs on Algorithmic Trading Strategies — Khoroshilov, Chernysh, Ekhtibarov et al.

Most code benchmarks tell you if an agent writes syntactically correct Python. This one checks if the strategy actually trades. The benchmark runs 400 real-world tasks through a multi-stage pipeline: does the code run, does it execute trades, does it do what the prompt asked? If you're building finance agents that touch execution logic, "it compiled" is not the bar. This paper finally defines what the bar actually is.
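For a feel of what a staged check looks like, here's a minimal sketch of the three questions above as an ordered gate. This is my own illustration, not the paper's implementation. `RunResult` and its fields are hypothetical stand-ins for whatever a real backtest would report.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RunResult:
    """Hypothetical summary of one generated strategy's backtest run."""
    ran_without_error: bool
    trade_count: int
    matches_prompt: bool


# Stages are checked in order; a strategy is scored by the first one it fails.
STAGES = [
    ("runs", lambda r: r.ran_without_error),
    ("trades", lambda r: r.trade_count > 0),
    ("matches_prompt", lambda r: r.matches_prompt),
]


def first_failed_stage(result: RunResult) -> Optional[str]:
    """Return the name of the first failed stage, or None if all pass."""
    for name, check in STAGES:
        if not check(result):
            return name
    return None


# Code that runs cleanly but never places a trade still fails the pipeline.
silent_strategy = RunResult(ran_without_error=True, trade_count=0, matches_prompt=False)
print(first_failed_stage(silent_strategy))
```

A benchmark that stops at the first stage would score `silent_strategy` as a pass.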

Catch you next week ✌️

Teng