Hey 👋

This week I noticed three separate stories about systems that scale by removing the bottleneck. Robots that don't need to talk, a runtime rewritten to handle load, a supply chain about to snap under demand. The AI scale-up is getting real.

Let's get into it.

#1 Figure's Robots Coordinate Without Talking to Each Other

Source: @TheHumanoidHub

Two robots walked into a room. Nobody told them who would do what.

Figure released footage this week of two Helix-02 humanoids autonomously tidying a space together, with zero shared planner and zero messaging between them. Each robot figured out what the other was doing purely by watching.

Multi-agent coordination is usually solved with a communication layer, some shared state, and a planner sitting above both agents telling them who owns which task. Figure skipped all of that. Going by the footage, the Helix-02 units infer intent from observation alone, which is closer to how humans actually collaborate than anything I've seen from a robotics company at this stage.
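To make the idea concrete, here is a toy sketch of observation-only coordination. It is not Figure's method, which hasn't been described in the footage. Every name in it (`infer_target`, `choose_task`, the 2D points) is hypothetical. Each robot watches where its peer is moving, guesses which task the peer is heading for, and takes the nearest remaining one. No messages, no shared planner.

```python
import math
from typing import Dict, Optional, Tuple

Point = Tuple[float, float]  # hypothetical 2D position, for illustration only


def infer_target(prev: Point, curr: Point, tasks: Dict[str, Point]) -> Optional[str]:
    """Guess the peer's task: the one it moved toward the most between two observations."""
    best, best_gain = None, 0.0
    for name, pos in tasks.items():
        # Positive gain means the peer got closer to this task.
        gain = math.dist(prev, pos) - math.dist(curr, pos)
        if gain > best_gain:
            best, best_gain = name, gain
    return best  # None if the peer isn't approaching anything


def choose_task(me: Point, peer_prev: Point, peer_curr: Point,
                tasks: Dict[str, Point]) -> str:
    """Pick the nearest task the peer does not appear to be heading for."""
    peer_target = infer_target(peer_prev, peer_curr, tasks)
    # If the peer's inferred task is the only one, fall back to all tasks.
    free = {n: p for n, p in tasks.items() if n != peer_target} or tasks
    return min(free, key=lambda n: math.dist(me, free[n]))


tasks = {"dishes": (0.0, 0.0), "laundry": (10.0, 0.0)}
# The peer is walking toward the dishes, so this robot yields and takes the laundry,
# even though the dishes are closer to it.
print(choose_task((1.0, 0.0), (5.0, 1.0), (3.0, 1.0), tasks))
```

Nothing in this loop grows with a central coordinator: adding a robot just adds another observer running the same rule.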

The second-order implication is significant. If you don't need a shared planner, you don't have a central point of failure. You can scale the number of units in a space without redesigning the coordination architecture. That changes the unit economics of deploying humanoids in warehouses or fulfillment centers, where Boston Dynamics and 1X are also competing hard right now.

I've been cautiously skeptical of humanoid timelines for a while. This specific capability, emergent coordination through observation rather than explicit communication, is the kind of result that makes me want to update that skepticism. Not ready to go full bull yet. But I'm watching Figure much more closely now.

#2 Bun's Creator Rewrote 960,000 Lines in Six Days

Six days. 960,000 lines of code. One developer.

Jarred Sumner, the creator of the Bun JavaScript runtime, used AI agents to rewrite Bun's entire codebase from Zig into Rust in less than a week. The rewritten code passed test suites on Linux. Sumner was clear that the process wasn't fully automated (he was directing agents throughout), but the scale of what a single human-plus-agent team achieved here is hard to ignore.

Why it matters: a 960,000-line rewrite is the kind of project that traditionally takes a team of engineers a year, minimum. The fact that one person with agents compressed that into six days changes what it means to maintain a large codebase at all.

If this holds up under real-world usage beyond the test suite, it puts serious pressure on the justification for large platform engineering teams. Expect infrastructure companies to start asking uncomfortable questions about headcount.

What I found noteworthy is that Sumner was in the loop the whole time. This wasn't autonomous agent heroics. It was a very capable human using agents as force multipliers, which is exactly the pattern I keep seeing work in production. The "agentic" part only matters when someone knows what they're doing.

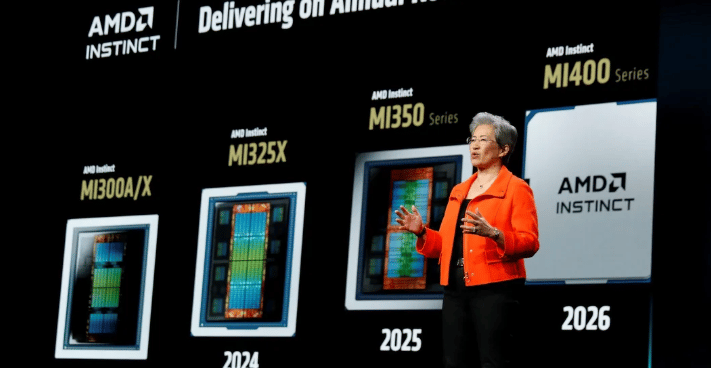

#3 AMD's Instinct GPU Backlog Just Got Very Long

OpenAI and Meta have each committed to deploying 6 gigawatts of AMD Instinct compute (AMD's latest data center GPUs). That's not a product roadmap slide. That's a purchasing commitment.

AMD reported its Q1 2026 earnings a few days ago. Data Center revenue hit $5.78 billion, up 57% year-over-year, beating consensus. CEO Lisa Su doubled the server CPU TAM outlook to over $120 billion by 2030, and Oracle is standing up a 50,000 MI450 GPU supercluster in Q3.

Here's what that means in practice if you're building agents. A combined 12 gigawatts committed to just two buyers doesn't leave much room for everyone else.

Our Memory Regime Score is at its highest ever, which means HBM demand is outstripping supply. AMD's Instinct cards are HBM-hungry. When the two largest AI labs in the world lock up the supply chain with multi-gigawatt deals, the mid-tier builders get whatever's left over, at worse economics.

Agent inference is memory-bandwidth-bound in a way that simple API calls aren't. Multi-step reasoning, long context, parallel tool calls: all of it hits HBM hard. The Oracle supercluster coming online in Q3 adds capacity, but demand is growing faster than that new supply.

If you ask me, AMD is executing better than the market gave them credit for 18 months ago. The NVIDIA stranglehold on AI compute was always more fragile than the stock prices implied. This is what real competition looks like.

This is exactly what I track on Tessara Research. I just launched The Chokepoint this week, a new weekly read on the AI infrastructure constraint map. Edition #1 (power just moved +16pp in 7 days, the largest weekly move on our entire board) is live. Free, every Tuesday. Subscribe for free →

📄 Paper of the Week

SkCC: Portable and Secure Skill Compilation for Cross-Framework LLM Agents — Ouyang, Xiao, Gu et al.

If you've ever ported an agent skill from LangChain to AutoGen and watched performance fall off a cliff, this paper is for you. The finding that prompt formatting alone causes up to 40% performance variation across frameworks is the kind of number that should make you uncomfortable.

SkCC treats skill development like a compiler problem: write once to an intermediate representation, compile to whatever framework you need. The security audit finding, that over a third of community skills carry vulnerabilities, is the part I'd be reading twice if I were deploying any shared skill library in production.
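To show what "compiler problem" means in practice, here is a minimal sketch of the write-once, compile-many idea. It is not SkCC's actual IR or API. `SkillIR`, `compile_skill`, and the two target names are hypothetical, and the targets are generic output shapes rather than any real framework's format.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class SkillIR:
    """Hypothetical framework-neutral description of one agent skill."""
    name: str
    description: str
    params: Dict[str, str] = field(default_factory=dict)  # param name -> JSON type


def to_json_tool(skill: SkillIR) -> Dict[str, Any]:
    """Backend 1: emit a JSON-schema-style tool definition."""
    return {
        "name": skill.name,
        "description": skill.description,
        "parameters": {
            "type": "object",
            "properties": {p: {"type": t} for p, t in skill.params.items()},
            "required": sorted(skill.params),
        },
    }


def to_prompt_text(skill: SkillIR) -> str:
    """Backend 2: emit a one-line description for prompt-based tool use."""
    args = ", ".join(f"{p}: {t}" for p, t in skill.params.items())
    return f"Tool {skill.name}({args}): {skill.description}"


# Assumed target names; a real system would have one backend per framework.
BACKENDS: Dict[str, Callable[[SkillIR], Any]] = {
    "json_tool": to_json_tool,
    "prompt_text": to_prompt_text,
}


def compile_skill(skill: SkillIR, target: str) -> Any:
    """Compile the same IR to whichever target format is requested."""
    if target not in BACKENDS:
        raise ValueError(f"unknown target: {target}")
    return BACKENDS[target](skill)


skill = SkillIR("search_docs", "Search the docs.", {"query": "string", "limit": "integer"})
print(compile_skill(skill, "prompt_text"))
```

The point of the design is that formatting decisions live in the backends, so a skill author never hand-tunes prompt layout per framework, and a security check can run once against the IR instead of once per port.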

📊 Free for Secret Agent readers — the AI Infrastructure Map

I mapped the entire AI compute supply chain: 7 chokepoints, 30+ companies, 4 critical lanes from silicon to live inference. Print it. Pin it. Reference it during earnings season. Get the AI infrastructure map

Catch you next week ✌️

Teng